Preventing Data Mining Bias In Futures Strategy Development

Stop curve-fitting your backtests and learn how to prevent data mining bias in futures strategy development using out-of-sample testing and statistical tests.

Data mining bias in futures strategy development happens when traders test so many parameter combinations that they find patterns fitting historical noise rather than real market behavior. Preventing data mining bias requires out-of-sample testing, limiting parameter counts, applying statistical corrections for multiple comparisons, and validating strategies across different market conditions before risking real capital.

Key Takeaways

- Testing 1,000 parameter combinations on the same dataset virtually guarantees finding at least one "profitable" strategy by pure chance

- Out-of-sample testing on data your strategy has never seen is the single most effective defense against data mining bias

- The Bonferroni correction and White's Reality Check are two statistical methods that adjust for multiple comparisons in backtesting

- Strategies with fewer than 5-6 free parameters are far less likely to be overfit than complex systems with 10+ inputs

- A strategy that works across multiple instruments, timeframes, and market regimes has a much higher probability of being genuine

Table of Contents

- What Is Data Mining Bias in Futures Trading?

- How Does Data Mining Bias Creep Into Strategy Development?

- Statistical Tests to Detect False Discoveries

- Practical Methods to Prevent Data Mining Bias

- Robustness Testing: Stress-Testing Your Strategy

- Common Mistakes That Amplify Data Mining Bias

- Frequently Asked Questions

- Conclusion

What Is Data Mining Bias in Futures Trading?

Data mining bias occurs when a trader or algorithm searches through enough parameter combinations, indicators, or rule sets that statistically significant-looking results emerge from random noise in historical data. The strategy appears profitable in backtesting but has no predictive power going forward. This is one of the most common and costly pitfalls in futures strategy development.

Data Mining Bias: The statistical tendency to find spurious patterns when testing a large number of hypotheses on the same dataset. In futures trading, it means your backtest results may reflect curve-fitting to past noise rather than a genuine market edge.

Here's the math that makes this dangerous. If you test a single strategy that has no real edge at a significance level of 0.05, there's a 5% chance it passes by luck alone. But if you test 100 such variations, you'd expect about 5 of them to pass that same threshold by pure chance. Test 1,000 combinations and you'd expect roughly 50 "winning" strategies that are completely meaningless.
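The arithmetic can be checked with a few lines of Python. This is a minimal sketch that assumes every test is independent and that none of the strategies has a real edge; the function names are illustrative, not from any library.

```python
def expected_false_positives(num_tests: int, alpha: float = 0.05) -> float:
    """Expected number of no-edge strategies that pass p < alpha by luck."""
    return num_tests * alpha


def prob_at_least_one_false_positive(num_tests: int, alpha: float = 0.05) -> float:
    """Chance that at least one of num_tests independent no-edge tests passes."""
    return 1.0 - (1.0 - alpha) ** num_tests


if __name__ == "__main__":
    for n in (1, 20, 100, 1000):
        print(
            n,
            expected_false_positives(n),
            round(prob_at_least_one_false_positive(n), 4),
        )
```

Real parameter sweeps are correlated rather than independent, so the true numbers will differ, but the direction is the same: the more variations you test, the closer a false "winner" gets to a certainty.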

This problem is especially acute in futures markets. ES, NQ, GC, and CL all have rich historical data stretching back years, which gives traders plenty of room to unknowingly mine for patterns. The more data you have, the more tempting it becomes to keep searching until something "works." According to research by Marcos López de Prado, author of Advances in Financial Machine Learning, the majority of published backtested strategies suffer from some form of data mining bias [1].

How Does Data Mining Bias Creep Into Strategy Development?

Data mining bias enters your strategy development process through repeated testing, parameter tweaking, and selection of the best-performing variation from many trials. It rarely happens all at once. Instead, it accumulates through small, reasonable-seeming decisions that collectively destroy the statistical validity of your results.

Consider a typical workflow. You start with a moving average crossover idea for NQ futures. You test 10 different fast-period lengths, 10 slow-period lengths, and 5 different ATR multipliers for stops. That's 500 combinations. You pick the best one, which shows a Sharpe ratio of 1.8 and a profit factor of 2.1. Those performance metrics look great on paper. But you've effectively run 500 hypothesis tests and selected the winner. The probability that your top result is genuine has dropped dramatically.
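To see how selection alone can produce an impressive number, the sketch below generates 500 "strategies" whose daily returns are pure random noise and reports the best annualized Sharpe ratio among them. The one-year sample length, the 1% daily volatility, and the function names are all assumptions made for illustration.

```python
import random
import statistics


def annualized_sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio of a daily return series (risk-free rate ignored)."""
    mean = statistics.fmean(daily_returns)
    stdev = statistics.stdev(daily_returns)
    return (mean / stdev) * periods_per_year ** 0.5


def best_sharpe_from_noise(num_strategies=500, num_days=252, seed=0):
    """Best Sharpe among strategies whose returns are zero-mean random noise."""
    rng = random.Random(seed)
    best = float("-inf")
    for _ in range(num_strategies):
        daily = [rng.gauss(0.0, 0.01) for _ in range(num_days)]
        best = max(best, annualized_sharpe(daily))
    return best


if __name__ == "__main__":
    print(round(best_sharpe_from_noise(), 2))
```

With only one year of data, the best of 500 noise strategies will usually show a Sharpe ratio well above 2, even though every one of them has zero edge by construction. Longer samples shrink that number, but they do not remove the selection effect.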

Overfitting: Building a strategy so precisely tuned to historical data that it captures noise rather than signal. An overfit strategy performs well in backtesting but poorly in live trading because the noise patterns it learned don't repeat.

The bias also compounds when you don't track how many combinations you've actually tried. Most traders don't keep a log of rejected strategies. They remember the final version that "worked" and forget the 50 variations they discarded. This selective memory makes the problem invisible.

Another subtle source: peeking at out-of-sample data. If you test on a holdout period, see poor results, then go back and adjust parameters before testing that same holdout period again, you've contaminated your validation data. The out-of-sample set has effectively become in-sample.

Statistical Tests to Detect False Discoveries

Several statistical corrections exist to account for the multiple comparisons problem in backtesting futures strategies. These methods adjust your confidence thresholds based on how many tests you've actually run, giving you a more honest assessment of whether a result is real.

False Discovery: A backtest result that appears statistically significant but is actually the product of random chance amplified by multiple testing. In strategy development, false discoveries lead to deploying strategies with no real edge.

Bonferroni Correction

The simplest approach. Divide your significance level by the number of tests. If you normally require p < 0.05 and you tested 100 parameter combinations, your new threshold becomes p < 0.0005. This is conservative, which means it may reject some genuine strategies, but it dramatically reduces false positives. For traders who prioritize avoiding bad strategies over finding every possible edge, the Bonferroni correction is a reasonable starting point.
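As a small illustration, the correction is a single division. The helper names and the example p-values below are hypothetical; the key input is the number of tests you actually ran, including the rejected ones.

```python
def bonferroni_threshold(alpha: float, num_tests: int) -> float:
    """Per-test significance threshold after a Bonferroni correction."""
    if num_tests < 1:
        raise ValueError("num_tests must be at least 1")
    return alpha / num_tests


def bonferroni_survivors(p_values: dict, num_tests_run: int, alpha: float = 0.05) -> list:
    """Names of strategies whose p-value clears the corrected threshold.

    num_tests_run counts every variation tried, not just those in p_values.
    """
    threshold = bonferroni_threshold(alpha, num_tests_run)
    return [name for name, p in p_values.items() if p < threshold]


if __name__ == "__main__":
    candidates = {"ma_cross_a": 0.03, "ma_cross_b": 0.0002, "ma_cross_c": 0.0009}
    print(bonferroni_threshold(0.05, 100))
    print(bonferroni_survivors(candidates, num_tests_run=100))
```

Passing the full trial count separately from the shortlist is deliberate: correcting only for the handful of strategies you kept would understate how hard you searched.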

White's Reality Check and Hansen's SPA Test

White's Reality Check (2000) tests whether the best strategy from a set of candidates genuinely outperforms a benchmark after accounting for data snooping [2]. Hansen's Superior Predictive Ability (SPA) test refines this by being less sensitive to poor-performing strategies in the comparison set. Both require some statistical programming but are well-suited for systematic futures strategy development.
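The core idea of the Reality Check can be sketched in plain Python. This is a simplified illustration, not a reference implementation: White's paper uses the stationary bootstrap, while the version below substitutes a circular block bootstrap with a fixed block length, and the function name and defaults are assumptions.

```python
import random
import statistics


def reality_check_pvalue(excess_returns, num_bootstrap=500, block_len=5, seed=0):
    """Approximate p-value for "the best strategy beats the benchmark".

    excess_returns: list of equal-length series, each holding one strategy's
    per-period return minus the benchmark's return.
    """
    rng = random.Random(seed)
    n = len(excess_returns[0])
    root_n = n ** 0.5
    means = [statistics.fmean(series) for series in excess_returns]
    observed = max(root_n * m for m in means)

    exceed = 0
    for _ in range(num_bootstrap):
        # Resample time indices in circular blocks; the same indices are used
        # for every strategy so cross-strategy correlation is preserved.
        idx = []
        while len(idx) < n:
            start = rng.randrange(n)
            idx.extend((start + j) % n for j in range(block_len))
        idx = idx[:n]
        # Centre each bootstrap mean on the observed mean, then take the max.
        boot_stat = max(
            root_n * (statistics.fmean([series[i] for i in idx]) - m)
            for series, m in zip(excess_returns, means)
        )
        if boot_stat >= observed:
            exceed += 1
    return exceed / num_bootstrap
```

A small p-value suggests the best strategy's outperformance is unlikely to be explained by searching across the whole candidate set. For production research, a vetted statistical library and the bootstrap scheme from the original paper are the safer choice.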

Deflated Sharpe Ratio

Developed by Bailey and López de Prado, the Deflated Sharpe Ratio adjusts a strategy's Sharpe ratio for the number of trials conducted, the skewness and kurtosis of returns, and the length of the sample [3]. A strategy with a Sharpe ratio of 1.5 tested among 200 alternatives may have a deflated Sharpe ratio near zero, meaning the result is likely noise. This metric addresses data mining bias directly by putting a number on how much your search process inflated results.
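The calculation can be sketched as follows. This is one reading of the formula in [3] and should be checked against the paper before use. It assumes Sharpe ratios are expressed per period (not annualized), that kurtosis is the raw value (3 for a normal distribution), and that you can estimate the standard deviation of Sharpe ratios across your trials; the function and parameter names are illustrative.

```python
import math
from statistics import NormalDist

EULER_MASCHERONI = 0.5772156649015329


def expected_max_sharpe(num_trials: int, trials_sharpe_std: float) -> float:
    """Sharpe ratio you would expect from the best of num_trials no-edge trials."""
    if num_trials < 2:
        raise ValueError("num_trials must be at least 2")
    z = NormalDist()
    return trials_sharpe_std * (
        (1 - EULER_MASCHERONI) * z.inv_cdf(1 - 1 / num_trials)
        + EULER_MASCHERONI * z.inv_cdf(1 - 1 / (num_trials * math.e))
    )


def deflated_sharpe_ratio(sharpe, num_obs, num_trials, trials_sharpe_std,
                          skew=0.0, kurtosis=3.0):
    """Probability-like score in [0, 1]; low values suggest the Sharpe is noise."""
    benchmark = expected_max_sharpe(num_trials, trials_sharpe_std)
    denom = math.sqrt(1 - skew * sharpe + (kurtosis - 1) / 4 * sharpe ** 2)
    return NormalDist().cdf((sharpe - benchmark) * math.sqrt(num_obs - 1) / denom)


if __name__ == "__main__":
    daily_sharpe = 1.5 / math.sqrt(252)   # annualized 1.5 expressed per day
    trials_std = 1.0 / math.sqrt(252)     # hypothetical spread across trials
    print(deflated_sharpe_ratio(daily_sharpe, 252, 200, trials_std))
    print(deflated_sharpe_ratio(daily_sharpe, 252, 2, trials_std))
```

Holding everything else fixed, raising the trial count from 2 to 200 pushes the score down sharply, which is exactly the penalty for searching harder.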

Practical Methods to Prevent Data Mining Bias

Prevention works better than detection. The most effective approach combines disciplined research design with structural safeguards that limit your ability to overfit, even accidentally.

1. Start with an Economic Rationale

Before testing any parameter, write down why you expect the strategy to work. "Moving average crossovers on ES might capture momentum because institutional order flow creates trends that persist over 5-20 minute windows" is a testable hypothesis. "I wonder what happens if I combine RSI with Bollinger Bands and a volume filter" is a fishing expedition. Strategies grounded in market microstructure, behavioral patterns, or structural features (like futures contract rollovers) are less likely to be data-mined artifacts.

2. Limit Free Parameters

Every adjustable parameter in your strategy is a degree of freedom that increases overfitting risk. A strategy with 3 parameters tested across a reasonable range is far more trustworthy than one with 12 parameters that's been optimized to the tick. As a rough guideline, keep free parameters under 5-6 for intraday futures strategies. When backtesting automated futures strategies, track parameter count as a first-pass filter.

3. Use Proper Out-of-Sample Testing

Reserve at least 30% of your historical data as a true holdout set. Develop and optimize your strategy on the in-sample portion only. When you're done, test it once on the out-of-sample data. Once. If you go back and adjust after seeing out-of-sample results, you've burned that data. Some traders use a three-way split: training data (50%), validation data (20%), and a final test set (30%) they never touch until deployment.

Out-of-Sample Testing: Evaluating a strategy on historical data that was not used during development or parameter optimization. This simulates how the strategy would have performed on "unseen" data and is the primary defense against overfitting.
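A chronological three-way split like the 50/20/30 example can be sketched in a few lines. The function name is hypothetical, and the sketch assumes your bars are already sorted oldest to newest; shuffling before splitting would leak future information into the training set.

```python
def chronological_split(bars, train_frac=0.5, validation_frac=0.2):
    """Split time-ordered bars into train, validation, and final test segments.

    The test segment is whatever remains after train and validation, so the
    defaults reproduce a 50% / 20% / 30% split.
    """
    if train_frac <= 0 or validation_frac < 0 or train_frac + validation_frac >= 1:
        raise ValueError("fractions must leave room for a test segment")
    n = len(bars)
    train_end = round(n * train_frac)
    validation_end = train_end + round(n * validation_frac)
    return bars[:train_end], bars[train_end:validation_end], bars[validation_end:]


if __name__ == "__main__":
    train, validation, test = chronological_split(list(range(1000)))
    print(len(train), len(validation), len(test))
```

Keeping the test segment as the most recent data mirrors live trading, where the strategy always faces data newer than anything it was tuned on.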

4. Walk-Forward Analysis

Walk-forward analysis automates the in-sample/out-of-sample process across rolling windows. You optimize on months 1-6, test on month 7. Then optimize on months 2-7, test on month 8. And so on. The concatenated out-of-sample results give you a more realistic picture of how the strategy adapts to changing market conditions. This approach is particularly useful for strategy optimization in futures because the parameters are allowed to adapt over time while every reported result still comes from data the optimizer never saw.
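The rolling windows can be generated with a short helper. This sketch only produces the train/test slices; the optimization and backtest steps are left to your own tooling, and the function name and defaults are assumptions.

```python
def walk_forward_windows(periods, train_len=6, test_len=1):
    """Yield (train_periods, test_periods) pairs for rolling walk-forward analysis.

    periods: time-ordered labels, for example month numbers or date ranges.
    Each step slides forward by test_len so test segments never overlap.
    """
    start = 0
    while start + train_len + test_len <= len(periods):
        train = periods[start:start + train_len]
        test = periods[start + train_len:start + train_len + test_len]
        yield train, test
        start += test_len


if __name__ == "__main__":
    months = list(range(1, 13))
    for train, test in walk_forward_windows(months):
        print("optimize on", train, "test on", test)
```

With twelve months and the defaults, the first window optimizes on months 1-6 and tests on month 7, and the second optimizes on months 2-7 and tests on month 8, matching the description above.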

5. Cross-Instrument Validation

If your mean reversion strategy works on ES, does it also work on NQ? What about GC or CL? A pattern that only appears in one instrument and one timeframe is more likely to be noise. Genuine market inefficiencies tend to show up across related instruments, though the magnitude may differ. Testing across the instruments covered in the futures instrument automation guide provides a practical cross-validation check.

Robustness Testing: Stress-Testing Your Strategy

Robustness testing pushes your strategy beyond normal conditions to see if it breaks. A strategy that only works with exact parameter values or during specific market regimes is fragile and likely overfit. Robust strategies degrade gracefully when conditions change.

Parameter Sensitivity Analysis

Vary each parameter by ±10-20% from its optimized value. If your strategy uses a 14-period RSI and performance collapses at 12 or 16, that's a red flag. Robust strategies show a "plateau" of profitability across a range of parameter values rather than a sharp peak at one specific setting. When the performance surface looks like a mountain peak instead of a mesa, you're likely looking at overfitting rather than a real edge.
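One rough way to put a number on "plateau versus peak" is to compare the optimized setting with its worst nearby neighbor. The scoring rule, function name, and sample results below are illustrative assumptions rather than a standard metric.

```python
def plateau_score(results: dict, best_param: float, tolerance: float = 0.2) -> float:
    """Worst neighbor's metric divided by the metric at best_param.

    results maps a parameter value to a performance metric (for example,
    profit factor). Neighbors are parameter values within +/- tolerance
    (as a fraction) of best_param. Scores near 1 suggest a plateau; scores
    near 0 or below suggest a fragile spike.
    """
    peak = results[best_param]
    if peak <= 0:
        raise ValueError("metric at best_param must be positive")
    band = abs(best_param) * tolerance
    neighbors = [
        metric for param, metric in results.items()
        if param != best_param and abs(param - best_param) <= band
    ]
    if not neighbors:
        raise ValueError("no neighboring parameter values were tested")
    return min(neighbors) / peak


if __name__ == "__main__":
    mesa = {10: 1.0, 12: 1.4, 14: 1.5, 16: 1.4, 18: 1.0}
    spike = {12: 0.1, 14: 1.5, 16: 0.2}
    print(plateau_score(mesa, 14), plateau_score(spike, 14))
```

In this hypothetical example the 14-period setting keeps over 90% of its performance at 12 and 16 in the first case and under 10% in the second, which is the red flag described above.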

Monte Carlo Simulation

Randomly shuffle the order of your trades and recompute the equity curve thousands of times. Reordering leaves total profit and profit factor unchanged, but it shows how much of your drawdown and equity-curve smoothness came from a lucky sequence of trades. To stress the Sharpe ratio and profit factor themselves, resample trades with replacement or perturb entry/exit prices by 1-2 ticks and re-run the backtest. If the metrics remain stable across most runs, the results are less likely to depend on luck. Monte Carlo simulation also provides confidence intervals for drawdown, which is more useful than a single maximum drawdown number.
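The trade-reshuffling idea can be sketched with the standard library. Because reordering does not change total profit, this example focuses on the drawdown distribution; the function names and the sample trade list are hypothetical.

```python
import random


def max_drawdown(trade_pnls) -> float:
    """Largest peak-to-trough drop of the cumulative P&L curve, as a positive number."""
    equity = peak = worst = 0.0
    for pnl in trade_pnls:
        equity += pnl
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


def shuffled_drawdowns(trade_pnls, num_runs=1000, seed=0) -> list:
    """Sorted max drawdowns from num_runs random reorderings of the same trades."""
    rng = random.Random(seed)
    trades = list(trade_pnls)
    drawdowns = []
    for _ in range(num_runs):
        rng.shuffle(trades)
        drawdowns.append(max_drawdown(trades))
    return sorted(drawdowns)


if __name__ == "__main__":
    trades = [100, -50, -30, 200, -10, 75, -120, 60, -40, 150]
    dds = shuffled_drawdowns(trades)
    # Median and 95th-percentile drawdown across the reshuffled sequences
    print(dds[len(dds) // 2], dds[int(0.95 * len(dds))])
```

Sizing positions against the 95th-percentile drawdown rather than the single backtested figure is one way to use the output.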

Regime Testing

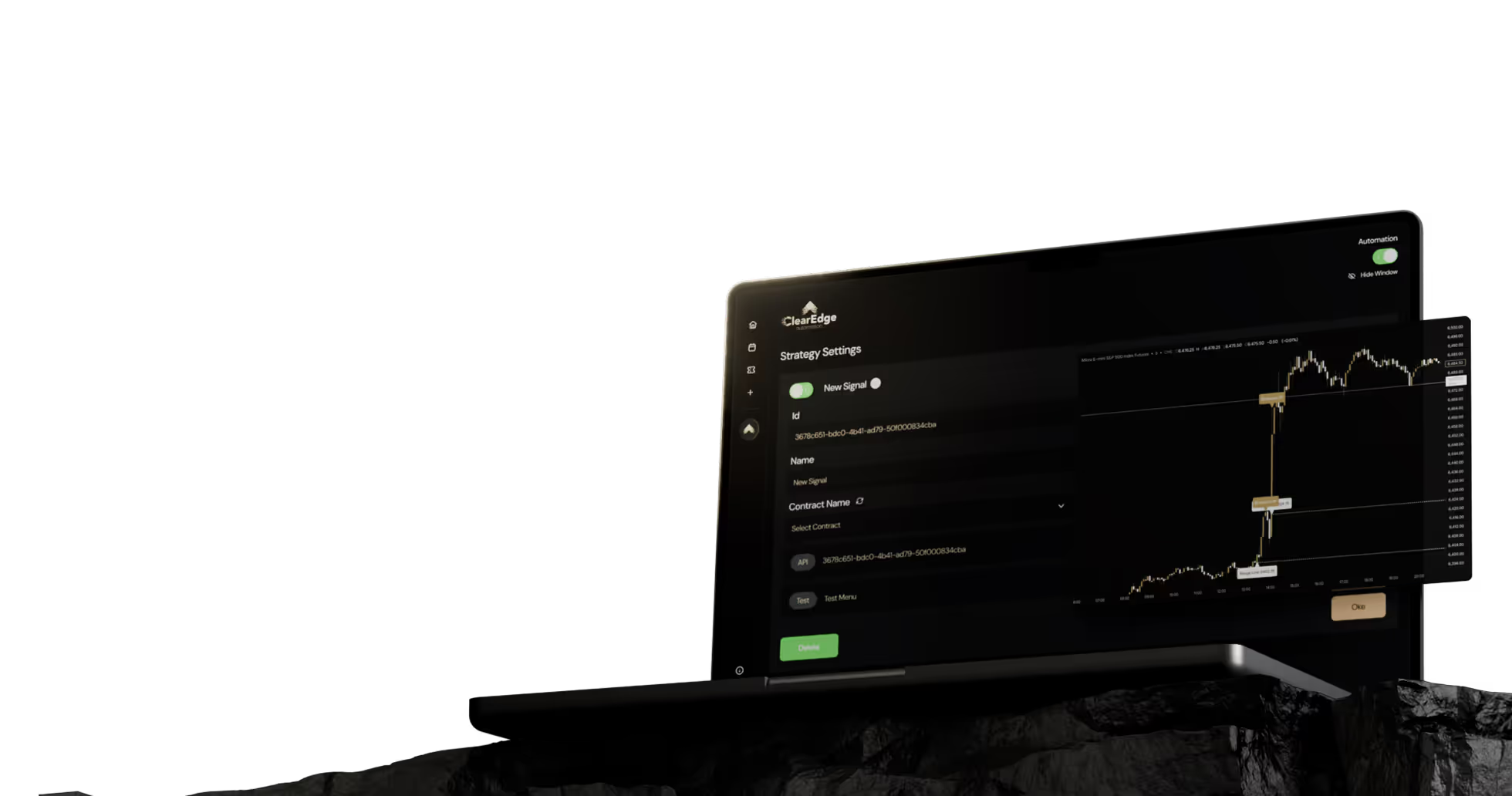

Test your strategy separately across different market regimes: trending vs. range-bound, low-volatility vs. high-volatility, pre-FOMC vs. post-FOMC. A strategy doesn't need to be profitable in every regime, but you should understand where it works and where it doesn't. If you're automating on a platform like ClearEdge Trading, you can build regime filters into your TradingView alerts to pause execution during unfavorable conditions.

Robustness Testing: A set of techniques that stress-test a strategy by modifying parameters, reshuffling trades, and testing across varied conditions. A robust strategy maintains reasonable performance metrics under perturbation, while a fragile one collapses.

Common Mistakes That Amplify Data Mining Bias

Not logging rejected strategies. If you've tested 200 variations and kept 1, that context matters statistically. Keep a research journal. Record every test, not just the winners. This practice makes your search process transparent and tells you how many trials to correct for when you apply a multiple-comparison adjustment.

Optimizing on the entire dataset. Using all available historical data for both development and evaluation leaves zero protection against overfitting. Always hold out data. For futures markets with 24-hour trading, even splitting by session (RTH vs. ETH) can provide a crude form of out-of-sample validation.

Adding indicators until it "works." Layering RSI + MACD + Bollinger Bands + volume profile + VWAP gives you so many degrees of freedom that you can fit almost any historical dataset. Each indicator you add should have a clear, independent reason for inclusion. If you can't explain why an indicator improves the strategy mechanistically, it's probably just adding noise.

Ignoring transaction costs. Many data-mined strategies produce small per-trade edges that disappear once you account for commissions, slippage, and the bid-ask spread. For ES futures, realistic round-trip costs run $4-6 per contract including slippage when fills are clean; a single full tick of slippage on ES is worth $12.50, so costs climb quickly with market orders in fast conditions. For CL with its $10 tick value, slippage can be higher during fast markets. Always include realistic commission and slippage estimates in your backtests.
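A quick calculation shows how a small gross edge can turn negative after costs. The commission and slippage figures in the example are hypothetical placeholders; substitute your own broker's fees and your measured slippage.

```python
def net_edge_per_trade(gross_edge_ticks: float, tick_value: float,
                       commission_round_trip: float,
                       slippage_ticks_round_trip: float) -> float:
    """Average dollar edge per contract per trade after commissions and slippage."""
    gross = gross_edge_ticks * tick_value
    costs = commission_round_trip + slippage_ticks_round_trip * tick_value
    return gross - costs


if __name__ == "__main__":
    # CL example: $10 tick value, 0.8-tick average gross edge,
    # assumed $5 round-trip commission and 1 tick of total slippage.
    print(net_edge_per_trade(0.8, 10.0, 5.0, 1.0))
```

In this example an $8 gross edge becomes a $7 loss per trade, which is why a backtest without costs says little about small-edge strategies.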

Frequently Asked Questions

1. How many backtest trials make data mining bias a serious concern?

There's no exact threshold, but testing more than 20-30 parameter combinations on the same dataset without statistical correction significantly increases false discovery risk. The more trials you run, the more aggressive your correction needs to be.

2. Can Pine Script strategy development cause data mining bias?

Yes. Pine Script makes it easy to rapidly test dozens of indicator combinations and parameter sets in TradingView's strategy tester. The convenience of rapid iteration increases the risk of mining for results unless you enforce out-of-sample discipline.

3. What sample size do I need for reliable backtest results?

A minimum of 100-200 trades in the out-of-sample period is a common guideline for statistical reliability. Fewer trades make it difficult to distinguish skill from luck, especially for strategies with moderate win rates.

4. Does walk-forward analysis eliminate data mining bias completely?

No, but it substantially reduces it. Walk-forward analysis can still be gamed if you keep adjusting the walk-forward window or re-optimization frequency until you find a version that performs well.

5. What Sharpe ratio should I expect after correcting for data mining bias?

Many strategies with in-sample Sharpe ratios of 1.5-2.0 drop to 0.5-0.8 after proper out-of-sample testing and multiple comparison corrections. If your corrected Sharpe ratio stays above 0.5, that's worth investigating further.

6. How does automation help prevent data mining bias?

Automation itself doesn't prevent data mining bias during development, but it does enforce consistency once you deploy a validated strategy. Platforms that execute predefined rules, like those discussed in the algorithmic trading guide, remove the temptation to manually override a strategy based on recent results.

Conclusion

Preventing data mining bias in futures strategy development comes down to honest research practices: start with a hypothesis, limit your parameters, split your data properly, and apply statistical corrections for the number of tests you've run. No single technique eliminates the problem, but combining out-of-sample testing, walk-forward analysis, and robustness checks gives you a realistic assessment of whether your strategy has a genuine edge.

Before deploying any backtested strategy with real capital, paper trade it for at least 30-60 days to gather live out-of-sample data. If performance holds up, scale in gradually. If it doesn't, the strategy likely reflected historical noise rather than a repeatable pattern.

Want to dig deeper? Read our complete guide to backtesting automated futures strategies for detailed setup instructions and validation frameworks.

References

- López de Prado, M. (2018). Advances in Financial Machine Learning. Wiley.

- White, H. (2000). "A Reality Check for Data Snooping." Econometrica, 68(5), 1097-1126. https://doi.org/10.1111/1468-0262.00152

- Bailey, D.H. & López de Prado, M. (2014). "The Deflated Sharpe Ratio." Journal of Portfolio Management, 40(5), 94-107. https://doi.org/10.3905/jpm.2014.40.5.094

- CME Group - Introduction to Futures

Disclaimer: This article is for educational purposes only. It is not trading advice. ClearEdge Trading executes trades based on your rules; it does not provide signals or recommendations.

Risk Warning: Futures trading involves substantial risk. You could lose more than your initial investment. Past performance does not guarantee future results. Only trade with capital you can afford to lose.

CFTC RULE 4.41: Hypothetical results have limitations and do not represent actual trading. Simulated results may over- or under-compensate for market factors such as lack of liquidity.

By: ClearEdge Trading Team | 29+ Years CME Floor Trading Experience
