Out of Sample Testing: Validating Your Futures Trading Strategy

Stop trading backtest illusions. Use out of sample testing and walk-forward analysis to check whether your futures strategy is robust or just overfitted.

Out of sample testing validates a futures trading strategy by evaluating it on data the strategy has never seen during development. This process splits historical data into separate segments—one for building the strategy (in-sample) and one for testing it (out-of-sample)—to measure whether performance holds up beyond the original dataset. Without out of sample validation, traders risk deploying curve-fitted strategies that fail in live markets.

Key Takeaways

- Out of sample testing uses data excluded from strategy development to check whether backtest results are real or artifacts of overfitting

- A common data split is 70% in-sample for development and 30% out-of-sample for validation, though ratios vary by strategy type and available data

- A drop of more than 30-50% in Sharpe ratio from in-sample to out of sample testing often indicates overfitting or data mining bias

- Walk-forward analysis automates repeated out of sample tests across rolling windows, providing a more thorough robustness check than a single split

- Paper trading or forward testing on live data is the final out of sample validation step before committing real capital

Table of Contents

- What Is Out of Sample Testing?

- Why Does Out of Sample Testing Matter for Futures Strategies?

- How to Split Your Data for Strategy Validation

- Walk-Forward Analysis: Repeated Out of Sample Testing

- Which Performance Metrics Should You Track?

- Common Mistakes in Out of Sample Validation

- Moving from Out of Sample Results to Live Trading

- Frequently Asked Questions

What Is Out of Sample Testing?

Out of sample (OOS) testing evaluates a trading strategy on historical data that was deliberately withheld during the development and optimization process. The idea is straightforward: if a strategy only works on the data it was built on, it probably won't work on new data either. And live markets are always new data.

Out of Sample Testing: A strategy validation method where performance is measured on data the strategy was not trained or optimized on. It separates "learning" data from "testing" data to detect overfitting.

When you develop a futures strategy—whether in Pine Script, Python, or any other language—you're making decisions about parameters based on what you see in historical price action. Moving average lengths, stop distances, entry thresholds. Every parameter choice reflects the data you looked at. Out of sample testing asks a simple question: do those choices hold up on data you didn't look at?

This concept comes from statistics and machine learning, where it's standard practice to never evaluate a model on its training data. The backtesting process for futures strategies should always include this validation step. Without it, your backtest results are unreliable.

Why Does Out of Sample Testing Matter for Futures Strategies?

Out of sample testing matters because it's the primary defense against data mining bias—the tendency to find patterns in historical data that are random noise rather than real market behavior. Futures markets are noisy. Given enough parameters and enough optimization runs, you can make almost any strategy look profitable on past data.

Data Mining Bias: The statistical distortion that occurs when many parameter combinations are tested on the same dataset, increasing the probability that "winning" results are due to chance. More parameters tested means more bias.

Consider this: if you test 1,000 parameter combinations on ES futures data from 2020-2023, some of those combinations will show strong returns purely by chance. According to research published by the CFA Institute, the probability of finding at least one statistically significant but spurious result increases rapidly with the number of trials [1]. With 1,000 trials at a 5% significance level, you'd expect roughly 50 false positives (1,000 × 0.05) even if none of the combinations has a real edge.
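
The arithmetic behind that estimate can be sketched in a few lines of Python. This is an illustration only: it assumes every trial is independent and that no combination has a real edge, which is a simplification when many parameter sets are near-duplicates of each other. The function names are hypothetical.

```python
def expected_false_positives(n_trials: int, alpha: float) -> float:
    """Expected count of spurious 'significant' results when no trial has a real edge."""
    return n_trials * alpha


def prob_at_least_one_false_positive(n_trials: int, alpha: float) -> float:
    """Chance that at least one of n independent trials passes by luck alone."""
    return 1.0 - (1.0 - alpha) ** n_trials


if __name__ == "__main__":
    print(expected_false_positives(1000, 0.05))
    print(prob_at_least_one_false_positive(20, 0.05))
```

Even 20 trials already give a better-than-even chance of at least one lucky "winner" under these assumptions, which is why the number of combinations tested matters as much as the best result found.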

Out of sample testing filters these false positives. A strategy that was curve-fitted to in-sample data will typically show degraded performance—or outright losses—when applied to data it hasn't seen. A genuinely robust strategy maintains its core characteristics, even if exact performance metrics shift somewhat.

For futures traders using algorithmic approaches, this distinction is the difference between a strategy that makes money in production and one that looked great on paper but bleeds capital in live trading.

How to Split Your Data for Strategy Validation

Data splitting divides your historical dataset into at least two segments: an in-sample period for strategy development and an out-of-sample period for validation. The split ratio and method directly affect how reliable your validation results will be.

Common Data Splitting Approaches

| Method | Split Ratio | Best For | Limitation |
| --- | --- | --- | --- |
| Simple Holdout | 70/30 or 60/40 (IS/OOS) | Quick validation, long datasets | Results depend on which period is held out |
| Walk-Forward | Rolling windows | Strategies sensitive to regime changes | More complex to implement |
| K-Fold (modified) | Multiple rotating segments | Limited data situations | Temporal ordering issues require care |
| Anchored Walk-Forward | Growing IS window, fixed OOS | Strategies that benefit from more data | Later periods have more IS data, creating imbalance |

The simplest approach is a single holdout. If you have 10 years of ES futures data, you might use years 1-7 for development and years 8-10 for out of sample testing. This works, but it has a weakness: your results depend heavily on what happened during those specific three years. If years 8-10 included a unique market event (like COVID in 2020), your OOS results may not be representative.
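
A single holdout is easy to sketch in Python. The version below is a minimal example, assuming the data is already sorted oldest to newest; `holdout_split` is a hypothetical helper, and the list of year labels stands in for real price bars. The key point is that the split is chronological, with no shuffling.

```python
from typing import Sequence, Tuple, TypeVar

T = TypeVar("T")


def holdout_split(bars: Sequence[T],
                  in_sample_fraction: float = 0.7) -> Tuple[Sequence[T], Sequence[T]]:
    """Split time-ordered data into (in_sample, out_of_sample) without shuffling."""
    if not 0.0 < in_sample_fraction < 1.0:
        raise ValueError("in_sample_fraction must be between 0 and 1")
    # round() avoids off-by-one cuts caused by floating point (e.g. 0.7 * 10).
    cut = round(len(bars) * in_sample_fraction)
    return bars[:cut], bars[cut:]


# Ten years of data represented as year labels 1..10 (placeholder for real bars).
years = list(range(1, 11))
in_sample, out_of_sample = holdout_split(years, 0.7)
print(in_sample, out_of_sample)
```

With ten years and a 0.7 fraction, the first seven years go to development and the last three are held out, matching the example above.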

How Much Data Do You Need?

Sample size matters more than most traders realize. A general guideline: your out of sample period should contain at least 30 trades for statistical relevance, and ideally 100 or more. For a strategy that trades ES futures once per day, 30 trades means roughly six weeks of data (five trading sessions per week). That's a minimum. For weekly strategies, you'd need 30 weeks of OOS data at minimum.
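
To turn a trade-count target into a calendar requirement, a rough calculation like the following can help. It assumes a steady trade frequency and a five-session week, which real strategies rarely match exactly; `weeks_of_data_needed` is a hypothetical helper.

```python
import math


def weeks_of_data_needed(min_trades: int, trades_per_week: float) -> int:
    """Weeks of out-of-sample data required to collect at least min_trades."""
    if trades_per_week <= 0:
        raise ValueError("trades_per_week must be positive")
    return math.ceil(min_trades / trades_per_week)


# One trade per session on a 5-session week vs. one trade per week.
daily_strategy_weeks = weeks_of_data_needed(30, 5)
weekly_strategy_weeks = weeks_of_data_needed(30, 1)
hundred_trade_weeks = weeks_of_data_needed(100, 5)
print(daily_strategy_weeks, weekly_strategy_weeks, hundred_trade_weeks)
```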

Sample Size: The number of trades or observations in a test period. Small sample sizes produce unreliable statistics—a strategy with 15 OOS trades doesn't tell you much regardless of its profit factor.

The data should also cover diverse market conditions. A strategy validated only during a bull trend hasn't been tested against choppy or bearish conditions. If your OOS window only includes one regime, your validation is incomplete.

Walk-Forward Analysis: Repeated Out of Sample Testing

Walk-forward analysis (WFA) runs multiple out of sample tests across sequential time windows, re-optimizing the strategy for each new period. It's a more rigorous form of out of sample validation than a single holdout because it tests whether a strategy's optimization process itself produces robust results over time.

Walk-Forward Analysis: A method that divides data into multiple sequential IS/OOS pairs, optimizes on each IS window, then tests on the immediately following OOS window. Results are stitched together from OOS periods only.

Here's how it works in practice. Say you have 5 years of NQ futures data:

- Window 1: Optimize on months 1-12, test on months 13-15

- Window 2: Optimize on months 4-15, test on months 16-18

- Window 3: Optimize on months 7-18, test on months 19-21

- Continue until data runs out
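
The window schedule above can be generated programmatically. The sketch below assumes a 12-month IS window, a 3-month OOS window, and a step equal to the OOS length, which reproduces the listed windows. The `Window` type, the function name, and the `anchored` flag (which keeps the IS start fixed at month 1) are hypothetical illustrations, not part of any particular backtesting tool.

```python
from typing import Iterator, NamedTuple


class Window(NamedTuple):
    is_start: int   # first in-sample month (1-based, inclusive)
    is_end: int     # last in-sample month (inclusive)
    oos_start: int  # first out-of-sample month (inclusive)
    oos_end: int    # last out-of-sample month (inclusive)


def walk_forward_windows(total_months: int, is_months: int = 12,
                         oos_months: int = 3, anchored: bool = False) -> Iterator[Window]:
    """Yield sequential IS/OOS windows, stepping forward by the OOS length."""
    oos_start = is_months + 1
    while oos_start + oos_months - 1 <= total_months:
        is_end = oos_start - 1
        # Anchored mode keeps the IS start at month 1, so the IS window grows.
        is_start = 1 if anchored else is_end - is_months + 1
        yield Window(is_start, is_end, oos_start, oos_start + oos_months - 1)
        oos_start += oos_months


windows = list(walk_forward_windows(total_months=60))
for w in windows[:3]:
    print(f"Optimize on months {w.is_start}-{w.is_end}, test on months {w.oos_start}-{w.oos_end}")
```

Because each OOS window starts where the previous one ended, the OOS segments never overlap and can be stitched into one continuous out-of-sample record.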

The combined OOS results from all windows give you a performance estimate that accounts for changing market conditions and parameter drift. Walk-forward efficiency (WFE) measures how much of the in-sample performance carries through to out of sample. A WFE above 50% is generally considered acceptable, though higher is better [2].
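
The article does not give a formula for WFE. One common way to compute it is the ratio of annualized OOS profit to annualized IS profit, so treat the definition below as an assumption rather than the only accepted one. The dollar figures are made up for illustration.

```python
def walk_forward_efficiency(is_profit: float, is_days: int,
                            oos_profit: float, oos_days: int) -> float:
    """Ratio of annualized OOS profit to annualized IS profit (assumed definition)."""
    if is_days <= 0 or oos_days <= 0:
        raise ValueError("period lengths must be positive")
    is_annualized = is_profit * 365.0 / is_days
    oos_annualized = oos_profit * 365.0 / oos_days
    if is_annualized <= 0:
        raise ValueError("WFE is not meaningful when in-sample profit is not positive")
    return oos_annualized / is_annualized


# Hypothetical: $24,000 over a 360-day IS window, $3,600 over a 90-day OOS window.
wfe = walk_forward_efficiency(24_000, 360, 3_600, 90)
print(f"WFE: {wfe:.0%}")
```

Annualizing both sides matters because the IS window is usually several times longer than the OOS window, so comparing raw profit would understate how much performance carried through.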

If you're developing strategies in TradingView and want to automate them, the TradingView backtesting and validation guide covers how to set up systematic testing workflows. Pine Script's built-in strategy tester provides basic OOS capability through date range controls, though more advanced walk-forward testing typically requires external tools like Python or dedicated backtesting platforms.

Which Performance Metrics Should You Track?

The most useful OOS metrics are those that measure risk-adjusted returns and consistency, not raw profit. A strategy that made $50,000 in OOS testing but with a maximum drawdown of $45,000 is not a viable strategy, even though the bottom line is positive.

OOS Metrics Comparison Checklist

| Metric | What It Measures | OOS Red Flag |
| --- | --- | --- |
| Sharpe Ratio | Risk-adjusted return | Drops below 0.5 or falls more than 50% from IS |
| Profit Factor | Gross profit / gross loss | Falls below 1.2 or drops more than 40% from IS |
| Max Drawdown | Largest peak-to-trough decline | Exceeds 2x the IS max drawdown |
| Win Rate | Percentage of winning trades | Shifts more than 10-15 percentage points from IS |
| Average Trade | Mean P&L per trade | Drops below 1 tick after commissions/slippage |
| Trade Count | Number of OOS trades | Fewer than 30 trades (insufficient sample) |

Sharpe Ratio: A measure of risk-adjusted return calculated as (average return minus risk-free rate) divided by the standard deviation of returns. A Sharpe ratio above 1.0 is generally considered good for futures strategies. Higher values mean better return per unit of risk.

Profit Factor: Total gross profits divided by total gross losses. A profit factor of 1.0 means break-even. Most viable futures strategies show a profit factor between 1.3 and 2.5 in backtesting, with OOS values often 20-40% lower than IS.
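
As a rough sketch, several of these metrics can be computed from a list of per-trade or per-period results using only the Python standard library. The function names and the sample P&L values are hypothetical. The Sharpe ratio here is per period and not annualized, and it uses the sample standard deviation, so it needs at least two observations with some variation.

```python
import math
import statistics
from typing import Sequence


def sharpe_ratio(returns: Sequence[float], risk_free: float = 0.0) -> float:
    """Per-period Sharpe: mean excess return over sample standard deviation."""
    excess = [r - risk_free for r in returns]
    return statistics.mean(excess) / statistics.stdev(excess)


def profit_factor(trade_pnl: Sequence[float]) -> float:
    """Gross profit divided by gross loss; infinite when there are no losing trades."""
    gross_profit = sum(p for p in trade_pnl if p > 0)
    gross_loss = -sum(p for p in trade_pnl if p < 0)
    return math.inf if gross_loss == 0 else gross_profit / gross_loss


def max_drawdown(trade_pnl: Sequence[float]) -> float:
    """Largest peak-to-trough decline of the cumulative P&L curve, in P&L units."""
    equity = peak = worst = 0.0
    for pnl in trade_pnl:
        equity += pnl
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


def win_rate(trade_pnl: Sequence[float]) -> float:
    """Fraction of trades with positive P&L."""
    return sum(1 for p in trade_pnl if p > 0) / len(trade_pnl)


# Hypothetical per-trade P&L in dollars.
trades = [200.0, -100.0, 300.0, -150.0, -50.0, 250.0]
print(profit_factor(trades), max_drawdown(trades), win_rate(trades))
```

Running the same functions on the IS trades and the OOS trades separately gives the side-by-side numbers the checklist compares.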

Here's the thing about comparing IS and OOS results: some degradation is normal and expected. If your in-sample Sharpe ratio is 2.0 and your out of sample Sharpe drops to 1.4, that's reasonable. The strategy lost some edge but is still performing well. If it drops to 0.3, something is wrong—likely overfitting.
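
The comparison can be made mechanical. The sketch below computes the percentage drop from IS to OOS and applies the Sharpe thresholds from the checklist (a floor of 0.5 and a maximum drop of 50%). The helper names are hypothetical, and the thresholds are rules of thumb rather than statistical tests.

```python
def degradation_pct(is_value: float, oos_value: float) -> float:
    """Percentage drop from the in-sample value to the out-of-sample value."""
    if is_value <= 0:
        raise ValueError("in-sample value must be positive")
    return (is_value - oos_value) / is_value * 100.0


def sharpe_red_flag(is_sharpe: float, oos_sharpe: float,
                    floor: float = 0.5, max_drop_pct: float = 50.0) -> bool:
    """True when OOS Sharpe is below the floor or fell more than max_drop_pct from IS."""
    return oos_sharpe < floor or degradation_pct(is_sharpe, oos_sharpe) > max_drop_pct


mild = degradation_pct(2.0, 1.4)    # the 2.0 -> 1.4 case from the text
severe = degradation_pct(2.0, 0.3)  # the 2.0 -> 0.3 case from the text
print(f"{mild:.0f}% drop, flagged: {sharpe_red_flag(2.0, 1.4)}")
print(f"{severe:.0f}% drop, flagged: {sharpe_red_flag(2.0, 0.3)}")
```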

Track these metrics alongside your automated performance tracking setup to maintain a clear picture of how strategies behave across different validation periods.

Common Mistakes in Out of Sample Validation

Even traders who understand the concept of OOS testing make mistakes that undermine the process. These errors can give you false confidence in a strategy that isn't actually robust.

1. Peeking at OOS data during development. This is the most common and most damaging mistake. If you check OOS results, tweak something, then re-check, your OOS data is no longer "out of sample." It's contaminated. Every time you look at OOS results and make a change based on what you see, that data becomes part of your training set. You only get to look at OOS results once—at the end.

2. Insufficient OOS sample size. Testing on 2 weeks of data with 12 trades and declaring the strategy "validated" is not meaningful. Statistical confidence requires adequate sample sizes. As a rough rule, you need at least 30 trades in OOS to draw even preliminary conclusions, and 100+ for reasonable confidence [3].

3. Using only one OOS period. A single holdout test can be misleading. The OOS period might be unusually favorable or unfavorable. Walk-forward analysis across multiple periods provides a much more reliable picture. Think of it this way: you wouldn't judge a baseball player's ability based on one at-bat.

4. Ignoring transaction costs in OOS testing. Some traders include commissions and slippage in their IS backtest but forget to carry these settings into OOS testing. For futures like ES ($12.50/tick) or CL ($10.00/tick), even small slippage assumptions significantly impact results. Always apply the same cost assumptions—or more conservative ones—to your OOS evaluation.
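
A quick calculation shows how much costs can eat into a small average trade. The tick values come from the article; the slippage and commission figures are hypothetical placeholders that you would replace with your own broker's numbers, and `net_trade_pnl` is an illustrative helper.

```python
TICK_VALUE = {"ES": 12.50, "CL": 10.00, "MES": 1.25}  # dollars per tick, per contract


def net_trade_pnl(symbol: str, gross_ticks: float, slippage_ticks: float,
                  commission_per_round_trip: float, contracts: int = 1) -> float:
    """Dollar P&L for one round-trip trade after slippage and commissions."""
    tick_value = TICK_VALUE[symbol]
    gross = gross_ticks * tick_value * contracts
    slippage = slippage_ticks * tick_value * contracts
    commission = commission_per_round_trip * contracts
    return gross - slippage - commission


# Hypothetical: a 2-tick average trade on ES, 1 tick of total slippage,
# and a $4.50 round-trip commission per contract.
net = net_trade_pnl("ES", gross_ticks=2, slippage_ticks=1, commission_per_round_trip=4.50)
print(f"Gross: $25.00, net: ${net:.2f}")
```

In this example a single tick of slippage plus commissions removes about two thirds of the gross profit per trade, which is why the same cost settings need to carry through to the OOS run.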

For a broader view of strategy optimization best practices, including how to avoid over-optimizing before you even get to OOS testing, see our detailed optimization guide.

Moving from Out of Sample Results to Live Trading

Passing out of sample testing doesn't mean a strategy is ready for live capital. It means the strategy has cleared one validation hurdle. The next step is forward testing—running the strategy on live market data in a paper trading or simulated environment.

Validation Stages Before Going Live

- In-sample development: Build and optimize the strategy on training data

- Out of sample testing: Validate on withheld historical data

- Forward testing (paper): Run on live data without real money for 4-8 weeks minimum

- Small size live: Trade with minimal position size (e.g., 1 MES contract at $1.25/tick instead of 1 ES at $12.50/tick)

- Full deployment: Scale to target position size if all prior stages confirm performance

Forward testing catches things that historical testing cannot: real-time data feed issues, execution delays, and how you handle the psychology of watching a strategy trade. The forward testing guide for futures traders covers this phase in more detail.

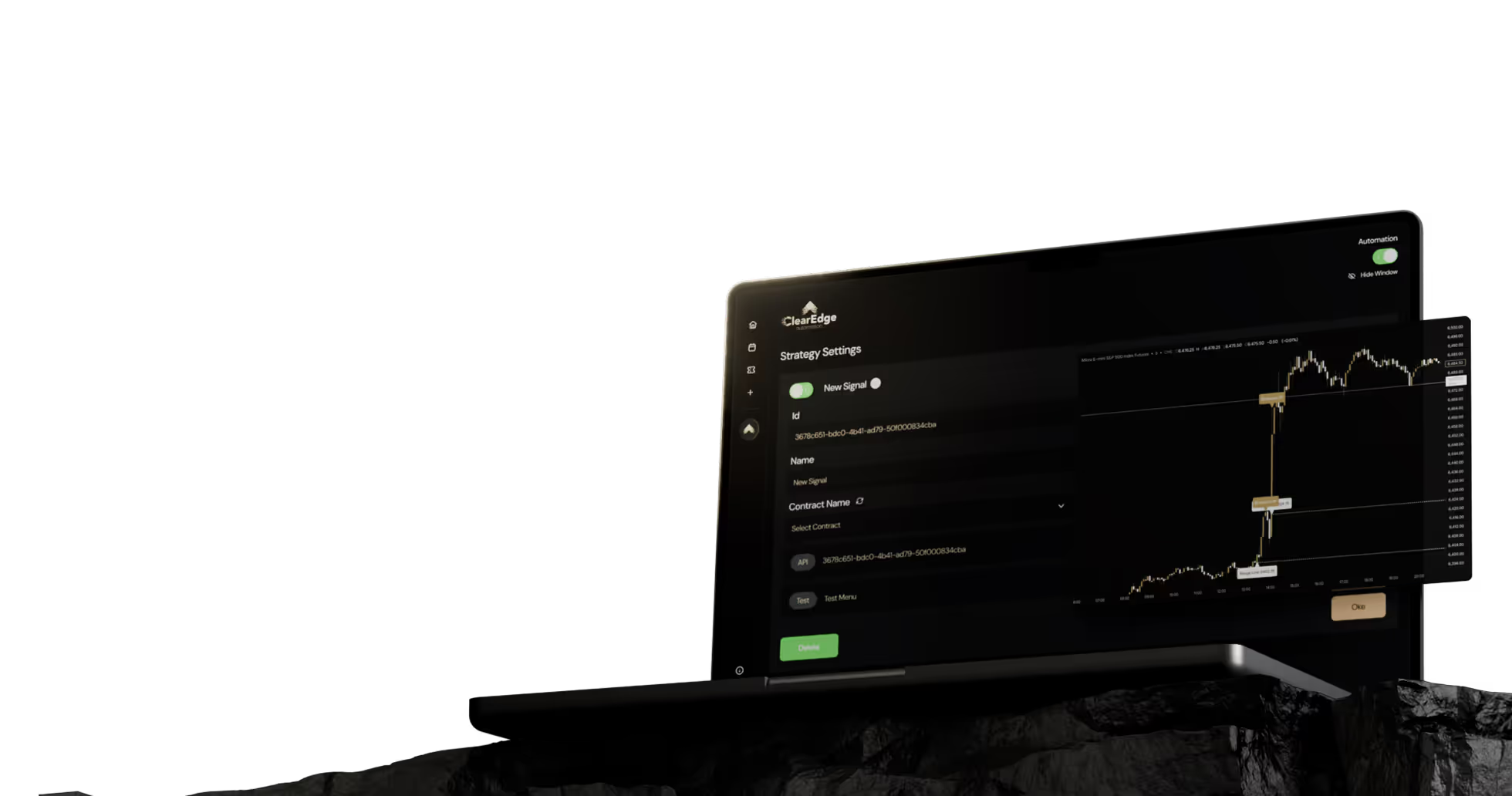

If you plan to automate execution, platforms like ClearEdge Trading connect TradingView alerts to your futures broker, handling the execution piece once your strategy has passed validation. The automation itself doesn't change your strategy's edge—it just removes manual execution delays and errors.

One important point: continue monitoring OOS-like metrics even after going live. Compare your live results against your OOS benchmarks monthly. If live performance degrades significantly below OOS expectations for an extended period (say, 3-6 months), the strategy may need re-evaluation or retirement. Strategy lifecycle management is an ongoing process, not a one-time event.
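
One way to make that monthly check concrete is to count how many consecutive recent months fall short of the OOS benchmark. The sketch below is only an illustration: the 50% tolerance, the 3-month trigger, the sample figures, and the function names are all assumptions, and it expects a positive benchmark such as average P&L per trade.

```python
from typing import Sequence


def months_below_benchmark(live_monthly: Sequence[float], oos_benchmark: float,
                           tolerance_pct: float = 50.0) -> int:
    """Length of the current run of trailing months below the tolerated level."""
    threshold = oos_benchmark * (1.0 - tolerance_pct / 100.0)
    streak = 0
    for value in reversed(live_monthly):
        if value >= threshold:
            break
        streak += 1
    return streak


def needs_review(live_monthly: Sequence[float], oos_benchmark: float,
                 min_months: int = 3, tolerance_pct: float = 50.0) -> bool:
    """Flag the strategy once the shortfall has lasted at least min_months."""
    return months_below_benchmark(live_monthly, oos_benchmark, tolerance_pct) >= min_months


# Hypothetical monthly average-trade P&L in dollars, oldest first.
# The OOS benchmark is $40 per trade, so the tolerated level is $20.
live = [45.0, 38.0, 15.0, 12.0, 18.0]
print(months_below_benchmark(live, 40.0), needs_review(live, 40.0))
```

Counting only the trailing streak means one bad month followed by a recovery resets the clock, which fits the idea of acting on sustained degradation rather than a single poor result.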

Frequently Asked Questions

1. What is the ideal ratio for in-sample vs. out of sample data?

A 70/30 split (70% in-sample, 30% out-of-sample) is a common starting point. If you have limited data, a 60/40 split gives more OOS validation at the cost of less development data.

2. Can I use walk-forward analysis in TradingView's Pine Script?

Pine Script's strategy tester doesn't natively support automated walk-forward analysis. You can manually set date ranges for sequential IS/OOS tests, but full walk-forward optimization typically requires Python or specialized backtesting software.

3. How many trades do I need in my out of sample period?

At minimum, 30 trades for preliminary validation and 100+ trades for statistically meaningful results. Fewer than 30 OOS trades makes it difficult to distinguish strategy edge from random variation.

4. What level of performance degradation from IS to OOS is acceptable?

A 20-40% drop in key metrics like Sharpe ratio or profit factor is typical and usually acceptable. Degradation beyond 50% often signals overfitting to the in-sample data.

5. Does out of sample testing guarantee a strategy will work in live trading?

No. OOS testing reduces the risk of deploying an overfitted strategy, but it doesn't account for execution issues, changing market regimes, or liquidity conditions. Forward testing on live data is a necessary additional step.

6. How often should I re-run out of sample validation on an existing strategy?

Re-validate quarterly or whenever you modify strategy parameters. Use the most recent market data as your new OOS period to check that the strategy still performs under current conditions.

Conclusion

Out of sample testing is the most practical tool futures traders have for separating genuine strategy edge from curve-fitted illusions. By splitting your data, tracking risk-adjusted metrics across unseen periods, and progressing through forward testing before committing capital, you dramatically reduce the chance of deploying a strategy that only worked in hindsight.

Start by applying a simple holdout test to your next strategy backtest. If results hold up, move to walk-forward analysis and then paper trading. For a broader look at the full development process, read the complete algorithmic trading guide. Do your own research and testing before trading live—out of sample validation is one step in the process, not the whole process.

Want to dig deeper? Read our complete guide to futures strategy development and backtesting for more detailed setup instructions and strategies.

References

1. CFA Institute - "Backtesting" and "Data Mining Bias in Financial Analysis"

2. CME Group - Introduction to Futures Education

3. Investopedia - Out-of-Sample Testing Definition

4. CFTC - Futures Market Basics

Disclaimer: This article is for educational purposes only. It is not trading advice. ClearEdge Trading executes trades based on your rules; it does not provide signals or recommendations.

Risk Warning: Futures trading involves substantial risk. You could lose more than your initial investment. Past performance does not guarantee future results. Only trade with capital you can afford to lose.

CFTC RULE 4.41: Hypothetical results have limitations and do not represent actual trading. Simulated results may over- or under-compensate for market factors such as lack of liquidity.

By: ClearEdge Trading Team | 29+ Years CME Floor Trading Experience