Avoid Curve Fitting: Build Robust Algorithmic Trading Strategies

Stop trading over-optimized strategies that fail live. Use walk-forward optimization and out-of-sample testing to prevent curve fitting in algorithmic trading.

Curve fitting happens when a trading strategy is over-optimized to match historical data so precisely that it fails in live markets. To avoid curve fitting in algorithmic trading, traders use out-of-sample testing, walk-forward optimization, and parameter reduction. This guide covers practical methods to detect overfitting, build robust automated strategies, and validate system performance before risking real capital.

Key Takeaways

- Curve fitting occurs when a strategy has too many parameters tuned to past data, producing backtests that look great but fail forward

- Walk-forward optimization tests strategies across rolling time windows to confirm they work outside their training data

- Limiting your strategy to 3-5 adjustable parameters reduces overfitting risk substantially

- Out-of-sample testing on data your strategy has never seen is the single most reliable overfitting check

- Robust strategies show consistent (not perfect) results across multiple market conditions and instruments

Table of Contents

- What Is Curve Fitting in Algorithmic Trading?

- Why Does Overfitting Happen So Often?

- How to Detect Curve Fitting in Your Strategy

- Out-of-Sample Testing: Your First Line of Defense

- How Walk-Forward Optimization Prevents Overfitting

- Building Robust Strategies That Survive Live Markets

- Common Curve Fitting Mistakes to Avoid

- Frequently Asked Questions

- Conclusion

What Is Curve Fitting in Algorithmic Trading?

Curve fitting is the process of over-optimizing a trading strategy's parameters to match historical data so tightly that the strategy loses its ability to perform on new, unseen data. Think of it like memorizing answers to a specific test rather than learning the subject. The strategy "knows" the past but can't handle the future.

Curve Fitting (Overfitting): When a trading model's parameters are excessively tuned to historical price data, capturing noise and random patterns instead of genuine market behavior. This produces artificially inflated backtest results that don't translate to live trading.

Here's what makes this problem so dangerous: a curve-fit strategy often looks spectacular on paper. The equity curve goes up smoothly, the win rate is high, and drawdowns appear manageable. But those results are an illusion. The strategy memorized specific price patterns that already happened rather than identifying repeatable market behavior.

According to research published by the CFA Institute, the majority of backtested trading strategies that show positive returns fail to deliver similar results in live trading [1]. A primary reason is overfitting. This problem affects traders using everything from simple moving average crossovers to complex algorithmic trading systems. If you've ever optimized a strategy to produce a beautiful backtest only to watch it fall apart with real money, curve fitting was likely the cause.

Why Does Overfitting Happen So Often?

Overfitting happens because optimization tools make it easy to keep tweaking parameters until the backtest looks perfect. The more parameters you add and the more precisely you tune them, the higher the risk that your strategy is fitting to noise rather than signal.

Several factors drive this:

Too many parameters. A strategy with 15 adjustable inputs can be tuned to match almost any historical dataset. Each additional parameter gives the optimizer another degree of freedom to shape results. A strategy using a moving average length, an RSI threshold, a volatility filter, a time-of-day filter, a day-of-week filter, and separate stop/target levels for each day has so many knobs that some combination will always produce a great-looking backtest. That doesn't mean the combination has predictive value.

Small sample sizes. Running an optimization on three months of 5-minute ES futures data gives you a limited number of trades. With only 50-100 trades in your sample, random clustering of wins can create the appearance of an edge. Academic literature generally suggests a minimum of 200-300 trades for statistical confidence in strategy results [2].
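To put a rough number on the small-sample problem, here is a minimal sketch that estimates the margin of error around an observed win rate. It assumes independent trades and uses the normal approximation to the binomial distribution; the function name `win_rate_margin` and the 55% win rate are illustrative choices, not figures from a real strategy.

```python
import math

def win_rate_margin(win_rate: float, n_trades: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for an observed win rate.

    Uses the normal approximation and assumes trades are independent.
    """
    return z * math.sqrt(win_rate * (1.0 - win_rate) / n_trades)

for n in (50, 100, 300):
    margin = win_rate_margin(0.55, n)
    print(f"{n:>4} trades: 55% observed win rate, margin of error {margin:.1%}")
```

With 50 trades the margin is roughly 14 percentage points, so a 55% observed win rate cannot be told apart from a coin flip. At 300 trades it narrows to under 6 points.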

Data snooping bias. Every time you look at results and go back to adjust your strategy, you're leaking information from the test data into your design decisions. After 20 iterations of tweaking and retesting on the same data, you've effectively seen the answers before taking the test.

Data Snooping Bias: The statistical error that occurs when the same dataset is used repeatedly for strategy development and testing. Each iteration increases the chance of finding patterns that are random rather than genuine.
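A small simulation can make the snooping effect concrete. The sketch below generates strategies with no edge at all (pure random daily returns) and reports the best result seen after 1, 20, and 200 attempts. The return distribution, the seed, and the helper name `random_strategy_sharpe` are assumptions made for illustration.

```python
import random
import statistics

def random_strategy_sharpe(rng: random.Random, n_days: int = 250) -> float:
    """Daily mean/stdev ratio of a strategy whose returns are pure noise."""
    returns = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
    return statistics.mean(returns) / statistics.stdev(returns)

rng = random.Random(42)  # fixed seed so the run is repeatable
sharpes = [random_strategy_sharpe(rng) for _ in range(200)]

# Best result seen after 1, 20, and 200 "tweak and retest" iterations.
for tries in (1, 20, 200):
    print(f"best of {tries:>3} tries: {max(sharpes[:tries]):+.3f}")
```

Every variant here has zero true edge, yet the best-of-N figure can only stay the same or rise as N grows. Picking the winner after many retests on the same data rewards luck, not skill.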

Confirmation bias. Traders naturally want their strategy to work. When an optimization produces good results, there's a psychological pull to accept those results without sufficient scrutiny. This is where trading psychology intersects with strategy development. The desire for a winning system can override disciplined validation.

How to Detect Curve Fitting in Your Strategy

A curve-fit strategy typically shows specific warning signs that distinguish it from a genuinely robust system. Recognizing these red flags early saves you from deploying a broken strategy with real capital.

Suspiciously perfect backtests. If your equity curve has almost no drawdowns and an unrealistically high Sharpe ratio (above 3.0 for daily data), be skeptical. Real markets produce messy results. A strategy showing 90%+ win rates on futures with consistent daily profits has almost certainly been overfit.

Parameter sensitivity. This is one of the most reliable tests. Change a single parameter by 10-20% and rerun the backtest. If performance collapses, the strategy depends on a fragile combination of exact values rather than a genuine edge. Robust strategies show gradual performance degradation when parameters shift, not a cliff.
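One way to automate this check is sketched below. It assumes you can wrap your platform's backtest in a function that takes a parameter dictionary and returns a single positive performance number; `toy_backtest` is a made-up stand-in for that, and the 15% shift and 50% drop thresholds are rules of thumb, not hard limits.

```python
from typing import Callable, Dict

def sensitivity_report(
    backtest: Callable[[Dict[str, float]], float],
    params: Dict[str, float],
    shift: float = 0.15,
) -> Dict[str, float]:
    """Worst fractional drop in the metric when each parameter moves +/- shift."""
    base = backtest(params)
    report = {}
    for name, value in params.items():
        worst = base
        for factor in (1.0 - shift, 1.0 + shift):
            trial = dict(params, **{name: value * factor})  # change one parameter only
            worst = min(worst, backtest(trial))
        report[name] = (base - worst) / base if base else float("nan")
    return report

# Toy stand-in for a real backtest: a smooth hill peaking at ma_len=50, stop=2.0.
def toy_backtest(p: Dict[str, float]) -> float:
    return 100.0 - 0.02 * (p["ma_len"] - 50) ** 2 - 10.0 * (p["stop"] - 2.0) ** 2

drops = sensitivity_report(toy_backtest, {"ma_len": 50.0, "stop": 2.0})
for name, drop in drops.items():
    flag = "FRAGILE" if drop > 0.5 else "ok"
    print(f"{name}: worst drop {drop:.1%} ({flag})")
```

The toy function degrades gently, so both parameters pass. A curve-fit strategy would show a large drop on at least one of them.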

Checklist: Curve Fitting Warning Signs

- Backtest Sharpe ratio above 3.0 on daily returns

- Win rate above 80% on a trend-following strategy

- More than 5-6 optimizable parameters

- Performance drops more than 50% when any single parameter changes 15%

- Strategy only works on one specific instrument and timeframe

- Backtest covers less than 2 years or contains fewer than 200 trades

- Strategy was developed and tested on the same data without holdout

- Rules include highly specific values (e.g., "sell at 2:37 PM on Tuesdays")

Cross-instrument testing. If your ES futures strategy completely fails on NQ, that's a concern. While different instruments have different characteristics, a genuine mean-reversion or trend-following edge should show at least some positive results across correlated markets. An approach that only works on one contract during one time period is almost certainly overfit.

Out-of-Sample Testing: Your First Line of Defense

Out-of-sample testing means reserving a portion of your historical data that the strategy never sees during development or optimization. You build and optimize on the in-sample data, then validate on the out-of-sample data exactly once.

Out-of-Sample (OOS) Testing: Evaluating a strategy on historical data that was completely excluded from the development and optimization process. The OOS period acts as a proxy for how the strategy might perform on future data it hasn't encountered.

A common split is 70% in-sample for development and 30% out-of-sample for validation. If you have five years of data, you'd develop on the first 3.5 years and test on the final 1.5 years. The order matters here. Your out-of-sample period should be the most recent data, since that best approximates the market conditions you'll actually trade in.
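As a minimal sketch of that split, the helper below cuts a time-ordered series so the most recent 30% is held out. The name `chronological_split` and the list of dates standing in for five years of daily bars are assumptions for illustration; real data would come from your backtesting platform.

```python
from datetime import date, timedelta
from typing import List, Sequence, Tuple

def chronological_split(bars: Sequence, in_sample_frac: float = 0.7) -> Tuple[Sequence, Sequence]:
    """Split time-ordered data so the most recent portion is held out."""
    cut = int(len(bars) * in_sample_frac)
    return bars[:cut], bars[cut:]

# Hypothetical stand-in for five years of daily bars: just the dates.
start = date(2019, 1, 1)
days: List[date] = [start + timedelta(days=i) for i in range(5 * 365)]

in_sample, out_of_sample = chronological_split(days)
print("in-sample:    ", in_sample[0], "to", in_sample[-1])
print("out-of-sample:", out_of_sample[0], "to", out_of_sample[-1])
```

The split is by position, never by random sampling. Shuffling bars before splitting would leak future information into the development set.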

Here's the thing that trips people up: you only get one shot at out-of-sample testing. If you test on the holdout data, don't like the results, go back and modify the strategy, then retest on the same holdout data, you've contaminated it. That data is no longer "out of sample" because your decisions were influenced by its results. At that point, you need fresh data to validate again.

For futures traders, this is straightforward to implement. Most backtesting platforms let you specify date ranges. Set your optimization period, lock it down, then run a single validation test on the holdout period. If performance degrades by more than 30-40% compared to in-sample results, overfitting is likely present. Some degradation is normal and expected. Total collapse means the strategy was curve-fit.
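The degradation check itself is simple arithmetic. The sketch below treats the 30-40% range as a warning band, which is one possible reading of the rule of thumb above; the function names and the dollar figures are hypothetical.

```python
def degradation(in_sample_metric: float, oos_metric: float) -> float:
    """Fractional drop from in-sample to out-of-sample (0.25 means 25% worse)."""
    if in_sample_metric <= 0:
        raise ValueError("in-sample metric must be positive to compare")
    return (in_sample_metric - oos_metric) / in_sample_metric

def verdict(drop: float, warn_at: float = 0.30, fail_at: float = 0.40) -> str:
    """Classify the drop using assumed rule-of-thumb thresholds."""
    if drop > fail_at:
        return "likely overfit"
    if drop > warn_at:
        return "borderline"
    return "within normal degradation"

# Hypothetical average profit per trade, in dollars: (in-sample, out-of-sample).
for in_s, oos in ((120.0, 90.0), (120.0, 50.0)):
    drop = degradation(in_s, oos)
    print(f"in-sample ${in_s:.0f}, OOS ${oos:.0f}: drop {drop:.0%}, {verdict(drop)}")
```

Use the same metric on both periods (average trade, profit factor, or annualized return) so the comparison is like for like.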

How Walk-Forward Optimization Prevents Overfitting

Walk-forward optimization (WFO) is a structured process that repeatedly optimizes a strategy on a rolling window of data, then tests it on the immediately following period. It's the closest thing to simulating real-time strategy adaptation using historical data.

Walk-Forward Optimization: A validation method that divides historical data into sequential segments, optimizes the strategy on each segment, then tests on the next unseen segment. The combined out-of-sample results across all segments indicate whether the strategy adapts to changing conditions or relies on curve-fit parameters.

Here's how it works in practice. Say you have five years of NQ futures data:

- Optimize on Year 1. Test on the first half of Year 2.

- Optimize on Year 1 through the first half of Year 2. Test on the second half of Year 2.

- Continue rolling forward, always optimizing on a growing (or fixed-length) window and testing on the next unseen period.

- Stitch together all the out-of-sample test results into a single equity curve.
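The window schedule described in these steps can be generated in a few lines. This is a sketch that counts time in whole months and assumes a 12-month initial optimization period with 6-month test periods, as in the example; `walk_forward_windows` and its parameters are illustrative names, and the optimization and backtest calls themselves are left to your platform.

```python
from typing import Iterator, Optional, Tuple

Window = Tuple[range, range]  # (optimization months, test months)

def walk_forward_windows(
    total_months: int,
    initial_train: int,
    test_len: int,
    fixed_train_len: Optional[int] = None,
) -> Iterator[Window]:
    """Yield (train, test) month ranges; each test range starts where training ends."""
    train_end = initial_train
    while train_end + test_len <= total_months:
        # Growing window starts at month 0; fixed-length window slides forward.
        train_start = 0 if fixed_train_len is None else max(0, train_end - fixed_train_len)
        yield range(train_start, train_end), range(train_end, train_end + test_len)
        train_end += test_len

windows = list(walk_forward_windows(total_months=60, initial_train=12, test_len=6))
for train, test in windows:
    print(f"optimize months {train.start}-{train.stop - 1}, "
          f"test months {test.start}-{test.stop - 1}")
```

Passing `fixed_train_len=12` switches from a growing window to a fixed-length one. In both cases the test ranges never overlap, so their results can be stitched into one out-of-sample equity curve.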

That combined OOS equity curve tells you what would have happened if you'd periodically re-optimized your strategy and traded it forward. If the combined OOS results hold up reasonably well against the in-sample results (a common rule of thumb is that OOS performance retains at least 40-60% of in-sample performance), the strategy has genuine adaptive qualities. If the OOS results are flat or negative while in-sample results look great, you've been fitting to noise.
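The comparison between OOS and in-sample results is often expressed as a single ratio, commonly called walk-forward efficiency. Here is a minimal sketch, assuming you have one annualized return figure per window for both the optimization and test periods. The numbers below are invented for illustration and are not results from any real strategy.

```python
from statistics import mean
from typing import Sequence

def walk_forward_efficiency(in_sample: Sequence[float], out_of_sample: Sequence[float]) -> float:
    """Mean out-of-sample result as a fraction of the mean in-sample result."""
    if len(in_sample) != len(out_of_sample):
        raise ValueError("need one in-sample and one OOS figure per window")
    is_avg = mean(in_sample)
    if is_avg <= 0:
        raise ValueError("in-sample average must be positive")
    return mean(out_of_sample) / is_avg

# Hypothetical annualized returns per walk-forward window (not real results).
is_returns = [0.30, 0.28, 0.35, 0.25]
oos_returns = [0.15, 0.10, 0.20, 0.12]

wfe = walk_forward_efficiency(is_returns, oos_returns)
print(f"walk-forward efficiency: {wfe:.0%}")
```

Annualizing both sides matters because optimization windows are usually longer than test windows, so raw profit totals are not comparable.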

Walk-forward optimization is especially relevant for strategies that use adaptive algorithms or regime switching logic. These approaches inherently re-calibrate their parameters, and WFO tests whether that recalibration process actually works or just chases past patterns. If you're developing advanced algorithmic strategies, WFO should be a standard part of your development workflow.

One practical note: the ratio between optimization window and test window matters. A common starting point is an optimization window of 12 months with a test window of 3 months (4:1 ratio). Shorter test windows give you more data points but may not capture enough trades per window for meaningful results.
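The trade-off can be checked with quick arithmetic. The sketch below counts how many test windows fit into a dataset for a fixed-length rolling setup and how many trades each window would hold; the 10 trades per month figure is an assumption chosen only to show the effect.

```python
def count_test_windows(total_months: int, opt_months: int = 12, test_months: int = 3) -> int:
    """Number of full, non-overlapping test windows in a rolling walk-forward."""
    if total_months < opt_months + test_months:
        return 0
    return (total_months - opt_months) // test_months

TRADES_PER_MONTH = 10  # hypothetical trade frequency

for test_months in (1, 3, 6):
    n_windows = count_test_windows(60, opt_months=12, test_months=test_months)
    trades = TRADES_PER_MONTH * test_months
    print(f"{test_months}-month tests: {n_windows} windows, about {trades} trades each")
```

On five years of data, a 3-month test window gives 16 out-of-sample segments. Dropping to 1-month tests triples that to 48, but each segment then holds a third as many trades.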

Building Robust Strategies That Survive Live Markets

Robust strategies produce consistent (not perfect) returns across multiple market conditions, instruments, and time periods. Building them requires deliberate choices during the development process that prioritize generalizability over backtest performance.

Limit parameters to 3-5. Every additional parameter is an additional opportunity to overfit. Some of the most durable algorithmic strategies in futures markets use remarkably few inputs. A simple breakout system with an entry threshold, a stop distance, and a target ratio has three parameters. That's harder to overfit than a system with 12.

Use parameter ranges, not point estimates. Instead of optimizing to find the single "best" moving average length (say, 47 periods), look for parameter ranges where the strategy performs well. If the strategy works with moving averages from 40-55 periods but fails everywhere else, you have a narrow parameter island. If it works from 20-80 periods with gradually changing performance, you have a robust parameter plateau. Trade strategies that sit on plateaus, not islands.
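A simple way to tell a plateau from an island is to measure how wide the range of "good enough" values is. The sketch below assumes you already have one performance figure per tested parameter value; the 70% cutoff, the function name `widest_plateau`, and both result sets are made up for illustration.

```python
from typing import Dict, Tuple

def widest_plateau(results: Dict[int, float], keep: float = 0.7) -> Tuple[int, int]:
    """Longest contiguous run of tested values whose metric is >= keep * best."""
    best = max(results.values())
    if best <= 0:
        raise ValueError("no profitable parameter value to anchor the plateau")
    threshold = keep * best
    ordered = sorted(results)
    best_run = (0, -1)  # (start index, end index); empty to begin with
    run_start = None
    for i, value in enumerate(ordered):
        if results[value] >= threshold:
            if run_start is None:
                run_start = i
            if i - run_start > best_run[1] - best_run[0]:
                best_run = (run_start, i)
        else:
            run_start = None
    return ordered[best_run[0]], ordered[best_run[1]]

# Hypothetical metric by moving average length (not real backtest output).
island = {20: 0.1, 30: 0.2, 40: 1.5, 47: 2.0, 55: 1.6, 60: 0.2, 70: 0.1, 80: 0.0}
plateau = {20: 1.2, 30: 1.4, 40: 1.5, 50: 1.6, 60: 1.5, 70: 1.4, 80: 1.3}

print("island: ", widest_plateau(island))
print("plateau:", widest_plateau(plateau))
```

The island's peak is higher, but its usable range is narrow. The plateau's lower peak with a wide usable range is the one more likely to hold up when conditions drift.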

Test across multiple market conditions. Your strategy should encounter bull markets, bear markets, range-bound periods, and high-volatility events like FOMC announcements and CPI releases. A strategy optimized only on the low-volatility period of 2017 will likely break during conditions like early 2020 or 2022. Ensure your backtest data includes at least 2-3 distinct market regimes.

Apply Monte Carlo simulation. Randomize the order of your trades and run the simulation thousands of times. With fixed position sizing, reordering leaves the total profit unchanged but alters the sequence of wins and losses, so each run produces a different drawdown path. This shows you the range of possible outcomes rather than the single historical sequence. If your strategy survives 95% of Monte Carlo runs without hitting your maximum drawdown threshold, it's more likely to survive live trading.
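Here is a minimal sketch of a trade-shuffling Monte Carlo test. It assumes fixed position size and independent trades, which real strategies only approximate. The trade list, the $3,000 drawdown limit, and the function names are hypothetical.

```python
import random
from typing import List, Sequence

def max_drawdown(trade_pnls: Sequence[float]) -> float:
    """Largest peak-to-trough decline of the cumulative P&L curve."""
    equity = peak = worst = 0.0
    for pnl in trade_pnls:
        equity += pnl
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst

def survival_rate(trade_pnls: Sequence[float], dd_limit: float,
                  runs: int = 2000, seed: int = 7) -> float:
    """Share of shuffled trade sequences whose max drawdown stays within dd_limit."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    trades: List[float] = list(trade_pnls)
    survived = 0
    for _ in range(runs):
        rng.shuffle(trades)
        if max_drawdown(trades) <= dd_limit:
            survived += 1
    return survived / runs

# Hypothetical history: 90 winners of $300 and 110 losers of $200.
history = [300.0] * 90 + [-200.0] * 110

rate = survival_rate(history, dd_limit=3000.0)
print(f"historical max drawdown: ${max_drawdown(history):,.0f}")
print(f"runs staying within $3,000 drawdown: {rate:.1%}")
```

Compare the survival rate against the 95% benchmark above. The single historical ordering is just one draw from this distribution, and often a lucky one.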

Forward test before going live. After passing all backtesting validation, run your strategy in a simulated or paper-trading environment for a minimum of 30-60 trading days. Forward testing in real-time market conditions is the final check before risking capital. Platforms that support paper trading make this step straightforward.

Parameter Optimization: The process of systematically testing different input values to find settings that produce the best strategy performance. When done carelessly, this leads to curve fitting. When done with proper validation (OOS testing, WFO), it helps identify genuinely useful parameter ranges.

Common Curve Fitting Mistakes to Avoid

Optimizing on the entire dataset. If you use all available data for optimization with nothing held back for validation, you have no way to tell whether results are real or overfit. Always reserve at least 25-30% of data for out-of-sample testing.

Adding rules to fix individual losing trades. After seeing a backtest, some traders add filters specifically to eliminate certain losses. "Don't trade on the third Thursday of the month" or "skip trades when the 73-period RSI is above 62." These rules fix the past but add fragile complexity. Each one increases your parameter count and overfitting risk.

Ignoring transaction costs. A strategy that shows profit before accounting for commissions, slippage, and exchange fees may be unprofitable in live trading. For ES futures, round-trip costs typically run $4-6 per contract including commissions and slippage. For a scalping strategy taking 20 trades per day, that's $80-120 per contract in daily costs that must be overcome. See our guide on slippage and execution costs for realistic estimates.
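The cost arithmetic is worth running against your own numbers. The sketch below reproduces the 20 trades per day example and shows how a thin edge flips sign once costs are subtracted; the $5 gross edge per trade is an assumption chosen to make the point.

```python
def daily_cost(trades_per_day: int, round_trip_cost: float, contracts: int = 1) -> float:
    """Total daily commissions plus slippage, in dollars."""
    return trades_per_day * round_trip_cost * contracts

gross_edge = 5.0  # hypothetical gross profit per trade per contract, in dollars

for cost in (4.0, 6.0):
    net = gross_edge - cost
    print(f"cost ${cost:.0f} per round trip: ${daily_cost(20, cost):.0f} per day in costs, "
          f"net per trade {net:+.0f} dollars")
```

At the low end of the cost range the strategy nets a dollar per trade. At the high end it loses a dollar per trade, even though the gross backtest looks identical.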

Optimizing across too many degrees of freedom simultaneously. Optimizing entry parameters, exit parameters, position sizing, time filters, and volatility filters all at once creates an enormous parameter space. Optimize in stages: get the core entry/exit logic working first, then add filters one at a time, validating at each step.
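A quick count shows how fast the search space grows. This sketch assumes a plain grid search with 10 candidate values per parameter; the split into three core parameters and two filters is hypothetical.

```python
import math
from typing import Sequence

def grid_size(values_per_param: Sequence[int]) -> int:
    """Number of backtests needed to try every combination in a grid search."""
    return math.prod(values_per_param)

core = [10, 10, 10]   # hypothetical: entry, stop, target, 10 candidate values each
filters = [10, 10]    # hypothetical: time filter, volatility filter

joint = grid_size(core + filters)
# Staged: search the core grid first, then each filter alone with the core fixed.
staged = grid_size(core) + sum(grid_size([n]) for n in filters)

print(f"all at once: {joint:,} combinations")
print(f"in stages:   {staged:,} combinations")
```

Fewer combinations means fewer chances for a random fit to rise to the top. Staging does not remove the need for validation at each step; it only shrinks the space being searched.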

Frequently Asked Questions

1. What is the difference between curve fitting and legitimate optimization?

Legitimate optimization finds parameter ranges where a strategy works across varied conditions. Curve fitting finds the single "perfect" setting for one specific historical period. The distinction comes down to validation: proper optimization includes out-of-sample testing to confirm results hold on unseen data.

2. How many trades do I need for a statistically valid backtest?

Most statistical literature recommends a minimum of 200-300 trades for meaningful confidence in strategy metrics [2]. Fewer trades increase the chance that results reflect random clustering rather than a genuine edge.

3. Can automated trading platforms help prevent curve fitting?

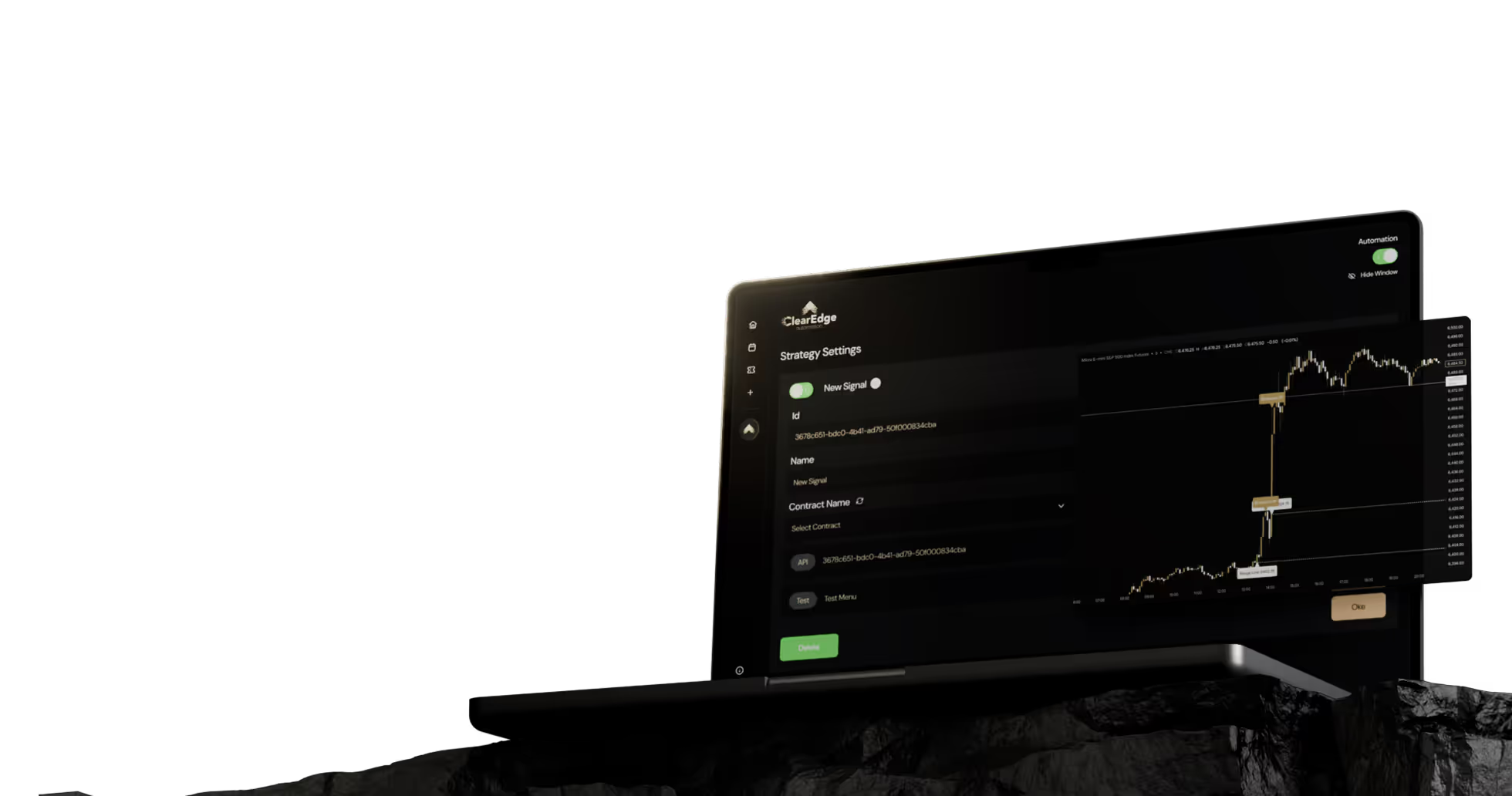

Automation platforms execute strategies consistently, but they don't prevent overfitting during the development phase. That responsibility falls on the trader. What automation does help with is forward testing, since platforms like ClearEdge Trading let you paper trade strategies in real-time conditions before going live.

4. Does walk-forward optimization guarantee a robust strategy?

No. Walk-forward optimization is a strong validation tool, but it's not a guarantee. A strategy can pass WFO and still fail if market structure changes fundamentally. WFO should be one of several validation steps, not the only one.

5. How often should I re-optimize my strategy parameters?

There's no universal answer, but quarterly re-optimization is a common starting point for futures strategies. The goal is to balance adaptation with stability. Re-optimizing too frequently can itself become a form of curve fitting to recent data.

Conclusion

Avoiding curve fitting in algorithmic trading comes down to disciplined validation: use out-of-sample testing, walk-forward optimization, limited parameters, and forward testing to confirm your strategy works on data it hasn't seen. No single technique eliminates overfitting risk entirely, but combining these methods gives you the best chance of building strategies that survive real market conditions.

Start by reviewing any existing strategies against the warning signs checklist above. If a strategy fails basic parameter sensitivity tests or collapses on out-of-sample data, go back to the drawing board rather than trading it live. For more on developing and validating automated strategies, read our complete algorithmic trading guide.

Want to dig deeper? Read our complete guide to advanced automated trading strategies for more detailed setup instructions and validation workflows.

References

[1] CFA Institute - Backtesting and Simulation in Strategy Development

[2] CME Group - Introduction to Futures Trading

[3] CFTC - Consumer Education Center

[4] Investopedia - Overfitting Definition

Disclaimer: This article is for educational purposes only. It is not trading advice. ClearEdge Trading executes trades based on your rules; it does not provide signals or recommendations.

Risk Warning: Futures trading involves substantial risk. You could lose more than your initial investment. Past performance does not guarantee future results. Only trade with capital you can afford to lose.

CFTC RULE 4.41: Hypothetical results have limitations and do not represent actual trading. Simulated results may not account for the impact of certain market factors such as lack of liquidity.

By: ClearEdge Trading Team | 29+ Years CME Floor Trading Experience | About Us
