Machine Learning Algorithmic Trading Guide for Futures Strategy Implementation

Harness machine learning for algorithmic futures trading. Build predictive models for ES and NQ using data-driven signals and robust risk management frameworks.

Machine learning algorithmic trading in futures combines predictive models with automated execution systems to identify patterns and execute trades based on data-driven signals. These systems use supervised learning for price prediction, unsupervised learning for pattern recognition, and reinforcement learning for strategy optimization, requiring robust backtesting and risk controls to validate performance before live deployment in ES, NQ, GC, and CL futures markets.

Key Takeaways

- Machine learning models analyze historical futures data to identify tradable patterns, typically requiring 2-5 years of historical data (tick data for higher-frequency strategies) for reliable training

- Supervised learning predicts price direction with roughly 55-65% accuracy in tested futures strategies, while reinforcement learning optimizes entry and exit timing

- Overfitting is the primary risk—models that show 80%+ backtest accuracy often fail in live markets due to curve-fitting to historical noise

- Hybrid approaches combining ML prediction with rule-based risk management outperform pure ML systems by 15-20% in forward testing

- Capital requirements start at $10,000-$25,000 for micro futures ML automation, with computational costs adding $100-$500 monthly for cloud infrastructure

Table of Contents

- What Is Machine Learning Algorithmic Trading?

- How Do ML Models Process Futures Data?

- What Are the Main Types of Machine Learning for Futures?

- How to Build an ML Futures Trading Strategy

- Why Do ML Models Fail in Live Trading?

- What Do You Need to Implement ML Trading?

- How to Measure ML Strategy Performance

- Frequently Asked Questions

What Is Machine Learning Algorithmic Trading?

Machine learning algorithmic trading uses statistical models to identify patterns in historical futures data and generate trading signals automatically. These systems process price action, volume, volatility, and order flow data to predict future price movements and execute trades without human intervention. Unlike traditional rule-based algorithms, ML systems adapt by learning from data rather than following fixed if-then logic.

The approach gained traction in retail futures trading around 2018-2020 as Python libraries like scikit-learn and TensorFlow became accessible and cloud computing costs dropped. By 2025, approximately 15-20% of retail algorithmic traders incorporate some form of ML into their futures strategies, according to industry surveys. The method works best for traders with programming skills and statistical knowledge who can validate models rigorously.

Machine Learning Trading: Automated trading systems that use statistical algorithms to learn patterns from historical data and make predictions about future price movements. These systems improve through exposure to more data rather than explicit programming.

Common applications include predicting breakout directions in ES futures during economic releases, forecasting intraday reversals in NQ based on volume patterns, and optimizing stop-loss placement using historical drawdown distributions. The algorithmic trading process requires different infrastructure when ML is involved—more computational power for training, larger data storage for historical tick data, and robust validation frameworks to prevent overfitting.

How Do ML Models Process Futures Data?

ML models transform raw futures data into features that predict price direction or volatility. The process starts with data collection—gathering tick-by-tick prices, volume, bid-ask spreads, and order book depth from exchanges or data vendors. Models typically require 2-5 years of historical data for training, with more data needed for higher-frequency strategies.

Feature engineering converts this raw data into predictive inputs. Common features include moving average crossovers, RSI values, volume-weighted price levels, time-of-day indicators, and volatility measures. A typical ES futures ML model might use 20-50 engineered features. The model learns relationships between these features and future price movements through training on historical data, adjusting internal parameters to minimize prediction errors.
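A minimal sketch of look-ahead-safe feature construction, in standard-library Python. The function names, the dictionary layout, and the short 3/5-bar windows are illustrative assumptions (a real ES model would more likely use windows such as 20 and 50 bars); the key point is that every feature for bar i is computed only from bars that closed before bar i.

```python
from statistics import mean

def sma(values, window):
    """Simple moving average; None until `window` values are available."""
    return [None if i + 1 < window else mean(values[i + 1 - window:i + 1])
            for i in range(len(values))]

def build_features(closes, fast=3, slow=5):
    """Return one feature dict per bar, built only from the PREVIOUS bar and earlier.

    Shifting by one bar means the features for bar i are known before bar i
    opens, so they can be used to decide a trade at bar i's open.
    """
    fast_ma = sma(closes, fast)
    slow_ma = sma(closes, slow)
    rows = []
    for i in range(len(closes)):
        j = i - 1  # index of the last fully completed bar
        if j < 1 or slow_ma[j] is None:
            rows.append(None)  # not enough history yet
            continue
        rows.append({
            "ma_spread": fast_ma[j] - slow_ma[j],         # moving-average crossover signal
            "return_1": closes[j] / closes[j - 1] - 1.0,  # one-bar momentum
        })
    return rows

closes = [100.0, 101.0, 102.0, 103.0, 104.0, 105.0, 106.0]
feats = build_features(closes)
```

Changing `closes[5]` leaves `feats[5]` untouched, which is a quick way to test a feature pipeline for leakage.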

The training process splits data into a training set (60-70%), a validation set (15-20%), and a test set (15-20%). Models learn their internal parameters on the training set, tune hyperparameters (settings such as tree depth or learning rate) on the validation set, and measure final performance on the test set, which the model has never seen. Walk-forward analysis adds another layer: the model trains on data from 2020-2022, tests on 2023, then retrains with 2023 included and tests on 2024. This simulates real-world deployment better than single-period backtesting.
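The expanding walk-forward schedule described above can be sketched as follows. `walk_forward_windows` is a hypothetical helper that only produces the year splits; fitting and scoring a model inside each window is left out.

```python
def walk_forward_windows(years, min_train_years):
    """Return (train_years, test_year) pairs on an expanding training window."""
    windows = []
    for k in range(min_train_years, len(years)):
        windows.append((years[:k], years[k]))  # train on everything before year k
    return windows

for train, test in walk_forward_windows([2020, 2021, 2022, 2023, 2024], 3):
    print(f"train on {train[0]}-{train[-1]}, test on {test}")
```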

Feature Engineering: The process of transforming raw market data into meaningful variables that ML models can use for prediction. Quality features capture market structure without introducing look-ahead bias.

What Are the Main Types of Machine Learning for Futures?

Three ML approaches dominate futures trading: supervised learning, unsupervised learning, and reinforcement learning. Each serves different purposes and requires different data structures and validation methods.

Supervised Learning for Price Prediction

Supervised learning trains models on labeled historical data where the outcome is known. For futures trading, the label might be "price up in next 5 minutes" or "trend continues for next 20 bars." Common algorithms include random forests, gradient boosting machines, and neural networks. These models achieve 55-65% directional accuracy in well-designed futures strategies—modest improvement over random, but enough for profitability with proper risk management.
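As a sketch of what a supervised label looks like, the hypothetical helper below marks each bar 1 if the close `horizon` bars later is higher and 0 otherwise, and a second helper scores directional accuracy. Treating unchanged prices as 0 is an assumption; some traders drop ties or use a three-class label instead.

```python
def direction_labels(closes, horizon):
    """Label bar i as 1 if the close `horizon` bars later is higher, else 0.

    The last `horizon` bars get None because their outcome is not known yet;
    drop them before training rather than guessing.
    """
    labels = []
    for i in range(len(closes)):
        if i + horizon >= len(closes):
            labels.append(None)
        else:
            labels.append(1 if closes[i + horizon] > closes[i] else 0)
    return labels

def directional_accuracy(predictions, labels):
    """Share of correct up/down calls, ignoring bars without a known outcome."""
    pairs = [(p, y) for p, y in zip(predictions, labels) if y is not None]
    return sum(p == y for p, y in pairs) / len(pairs)

labels = direction_labels([100.0, 101.0, 100.0, 102.0, 102.0], horizon=1)
```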

Random forests work well for ES and NQ futures because they handle non-linear relationships and resist overfitting better than single decision trees. Gradient boosting often outperforms for shorter timeframes where subtle patterns matter. The strategy selection process depends on market conditions and timeframe—what works for 5-minute NQ scalping differs from 60-minute ES swing trades.

Unsupervised Learning for Pattern Recognition

Unsupervised learning finds structure in data without predefined labels. Clustering algorithms like k-means group similar market conditions—identifying when ES trades in low-volatility consolidation versus high-volatility trending environments. These regime classifications help adapt strategy parameters automatically.
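A minimal illustration of regime clustering: plain 1-D k-means (Lloyd's algorithm) applied to per-session volatility readings such as ATR values. The input values and the choice of k=2 (calm versus volatile) are assumptions for illustration; production code would more likely use a library implementation such as scikit-learn's `KMeans` on several features at once.

```python
def kmeans_1d(values, k=2, iterations=50):
    """Plain 1-D k-means. Returns (centers, labels); label 0 is the lowest center."""
    lo, hi = min(values), max(values)
    # Deterministic start: centers spread evenly (and in ascending order) from min to max.
    centers = [lo + (hi - lo) * (c + 0.5) / k for c in range(k)]
    labels = [0] * len(values)
    for _ in range(iterations):
        # Assignment step: each value joins its nearest center.
        labels = [min(range(k), key=lambda c: abs(v - centers[c])) for v in values]
        # Update step: each center moves to the mean of its members.
        new_centers = []
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            new_centers.append(sum(members) / len(members) if members else centers[c])
        if new_centers == centers:
            break  # converged
        centers = new_centers
    return centers, labels

session_vols = [0.5, 0.6, 0.55, 2.0, 2.2, 1.9]  # hypothetical ATR readings
centers, regimes = kmeans_1d(session_vols)
```

In one dimension the centers keep their starting order, so label 0 marks the calmer regime and label 1 the more volatile one.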

Principal component analysis reduces dozens of correlated features to a few uncorrelated components, simplifying models and reducing overfitting risk. Traders use this for GC futures where multiple inputs (dollar index, real yields, equity volatility) contain overlapping information.

Reinforcement Learning for Strategy Optimization

Reinforcement learning trains agents through trial and error, rewarding profitable actions and penalizing losses. The agent learns optimal entry timing, position sizing, and exit rules by interacting with historical market simulations. This approach shows promise for complex multi-step decisions like scaling into positions or adjusting stops based on volatility.

Implementation is more complex than supervised learning and requires careful reward function design. Poorly designed rewards lead to unrealistic strategies that maximize backtest metrics but fail live. Testing periods of 6-12 months in paper trading are standard before live deployment with reinforcement learning systems.
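To make reward design concrete, here is a sketch of a per-step reward for a hypothetical trading environment. Charging transaction costs whenever the position changes and subtracting a drawdown penalty are common design choices, not the only correct ones; `dd_weight` and the dollar units are assumptions. Without the cost term, an agent can learn to flip positions constantly because every flip looks free in simulation.

```python
def step_reward(prev_pos, new_pos, price_change, cost_per_contract,
                equity, peak_equity, dd_weight=0.1):
    """Return (reward, new_equity, new_peak) for one step.

    new_pos is the position held over this step; price_change is the move
    in dollars per contract during the step.
    """
    pnl = new_pos * price_change                          # mark-to-market P&L
    costs = abs(new_pos - prev_pos) * cost_per_contract   # commission + slippage on changes
    new_equity = equity + pnl - costs
    new_peak = max(peak_equity, new_equity)
    drawdown = new_peak - new_equity                      # always >= 0
    reward = (pnl - costs) - dd_weight * drawdown         # penalize sitting in drawdown
    return reward, new_equity, new_peak

# Go long 1 contract, gain $12.50, pay $2.50 in costs
r1, eq, peak = step_reward(0, 1, 12.5, 2.5, 1000.0, 1000.0)
# Hold, then lose $25.00 while below the equity peak
r2, eq, peak = step_reward(1, 1, -25.0, 2.5, eq, peak)
```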

How to Build an ML Futures Trading Strategy

Building a machine learning futures strategy follows a structured process from data collection through validation and deployment. Skipping steps leads to overfitted models that backtest well but fail in live markets.

Step 1: Define the Prediction Target

Specify exactly what you're predicting—direction in next 15 minutes, probability of 10-tick move in next hour, or likelihood of gap fill by market close. Clear targets focus feature engineering and model selection. For ES futures, predicting next 30-minute direction works better than longer horizons where noise dominates.
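Targets like "10-tick move in the next hour" can be labeled from bar highs. This sketch assumes ES's 0.25-point tick and a bar series in which `horizon` bars span the chosen window (for example, 60 one-minute bars for an hour); the function name is hypothetical, and it only checks the upside.

```python
ES_TICK = 0.25  # ES minimum price increment, in index points

def hit_target_labels(closes, highs, horizon, ticks, tick_size=ES_TICK):
    """1 if any high in the next `horizon` bars reaches close + ticks * tick_size, else 0."""
    target_dist = ticks * tick_size
    labels = []
    for i in range(len(closes)):
        if i + horizon >= len(closes):
            labels.append(None)  # future window incomplete: outcome unknown
            continue
        future_high = max(highs[i + 1:i + 1 + horizon])
        labels.append(1 if future_high >= closes[i] + target_dist else 0)
    return labels

closes = [5000.0, 5001.0, 5003.0, 5002.0]
highs = [5000.5, 5002.5, 5003.0, 5002.5]
labels = hit_target_labels(closes, highs, horizon=2, ticks=10)
```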

Step 2: Collect and Clean Data

Gather historical futures data including timestamp, open, high, low, close, and volume for each bar. Tick data provides more information but requires more storage and processing. Remove errors like zero-volume bars, timestamp gaps, and extreme outliers from fat-finger trades. Most vendors provide clean data, but validation prevents garbage-in-garbage-out problems.
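A sketch of the cleaning checks above, assuming bars arrive as dictionaries with hypothetical `time`, `high`, `low`, and `volume` keys. The 10x-median range threshold is an assumption to tune per instrument, and flagged gaps still need human review: scheduled session breaks (such as the daily CME Globex break) are normal, and genuine fast-market bars can look like outliers.

```python
from datetime import datetime, timedelta
from statistics import median

def clean_bars(bars, bar_size=timedelta(minutes=1), outlier_mult=10.0):
    """Drop zero-volume and extreme-range bars; report timestamp gaps in what remains."""
    ranges = [b["high"] - b["low"] for b in bars if b["volume"] > 0]
    typical = median(ranges) if ranges else 0.0
    kept, gaps = [], []
    for b in bars:
        if b["volume"] <= 0:
            continue  # zero-volume bar: usually a feed artifact
        if typical > 0 and (b["high"] - b["low"]) > outlier_mult * typical:
            continue  # range far beyond normal: possible bad tick
        if kept and b["time"] - kept[-1]["time"] > bar_size:
            gaps.append((kept[-1]["time"], b["time"]))  # missing bar(s) between these
        kept.append(b)
    return kept, gaps

t0 = datetime(2024, 1, 2, 9, 30)
sample = [
    {"time": t0, "high": 100.50, "low": 100.00, "volume": 10},
    {"time": t0 + timedelta(minutes=1), "high": 100.75, "low": 100.25, "volume": 0},
    {"time": t0 + timedelta(minutes=2), "high": 101.00, "low": 100.50, "volume": 5},
    {"time": t0 + timedelta(minutes=3), "high": 150.00, "low": 100.00, "volume": 1},
    {"time": t0 + timedelta(minutes=4), "high": 101.25, "low": 100.75, "volume": 8},
]
kept, gaps = clean_bars(sample)
```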

Step 3: Engineer Predictive Features

Create variables the model can learn from. Technical indicators form the foundation—moving averages, momentum oscillators, volatility measures. Add time features like hour of day and day of week. Include market context like distance from previous day's close or position relative to opening range. Test 30-50 features initially, then reduce based on importance scores.
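The time and context features from this step might be computed like this. The field names and the opening-range inputs are assumptions; `or_position` should only be used after the opening range has finished forming, otherwise it leaks later highs and lows into earlier bars.

```python
from datetime import datetime

def context_features(ts, price, prev_day_close, or_high, or_low):
    """Time and market-context features for one bar; all inputs must be known at ts."""
    or_width = or_high - or_low
    return {
        "hour": ts.hour,
        "day_of_week": ts.weekday(),                # Monday = 0
        "dist_prev_close": price - prev_day_close,  # points above/below prior day's close
        # 0 = opening-range low, 1 = opening-range high; outside [0, 1] = breakout
        "or_position": (price - or_low) / or_width if or_width > 0 else 0.5,
    }

features = context_features(datetime(2024, 3, 5, 10, 15), 5105.0, 5100.0, 5110.0, 5090.0)
```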

Step 4: Train and Validate the Model

Split data chronologically—never randomly, as this introduces look-ahead bias. Train on the earliest 60%, validate on the next 20%, test on the final 20%. Use walk-forward analysis with multiple training windows to assess stability. Models that work in 2021 but fail in 2022 lack robustness. The backtesting process must simulate realistic execution conditions including slippage and latency.
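A chronological 60/20/20 split needs nothing more than slicing a time-ordered list; this sketch assumes the rows are already sorted oldest to newest. Rounding the boundaries (rather than truncating) is a small design choice to avoid floating-point surprises.

```python
def chronological_split(rows, train_frac=0.6, val_frac=0.2):
    """Split time-ordered rows into (train, validation, test) without shuffling."""
    n = len(rows)
    train_end = round(n * train_frac)
    val_end = train_end + round(n * val_frac)
    return rows[:train_end], rows[train_end:val_end], rows[val_end:]

train, val, test = chronological_split(list(range(10)))
```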

Step 5: Implement Risk Controls

Add maximum daily loss limits, position size constraints based on volatility, and correlation checks if running multiple models. ML models predict direction, but risk management determines profitability. Hard stops at 2-3% daily loss prevent single-day blowups. The position sizing framework should account for prediction confidence—risk more on high-confidence signals if validation supports it.
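A sketch of two of these controls: volatility-scaled sizing and a hard daily loss stop. The function names, the 1% risk per trade, the 1.5x ATR stop, and the 2% daily limit are illustrative assumptions; the $5 point value matches one MES contract.

```python
import math

MES_POINT_VALUE = 5.0  # dollars per index point for one MES contract

def contracts_for_risk(equity, risk_frac, atr_points, stop_atr_mult, point_value):
    """Size so that a stop placed stop_atr_mult * ATR away risks risk_frac of equity."""
    risk_per_contract = atr_points * stop_atr_mult * point_value
    if risk_per_contract <= 0:
        return 0
    return math.floor(equity * risk_frac / risk_per_contract)

def trading_allowed(start_equity, current_equity, max_daily_loss_frac=0.02):
    """Hard daily stop: False once today's loss reaches the limit."""
    return (start_equity - current_equity) < start_equity * max_daily_loss_frac

# $25,000 account, 1% risk per trade, ATR of 10 points, stop at 1.5x ATR on MES
size = contracts_for_risk(25_000, 0.01, 10.0, 1.5, MES_POINT_VALUE)  # 3 contracts
```

Wider volatility automatically means fewer contracts, which keeps dollar risk per trade roughly constant across regimes.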

Walk-Forward Analysis: A validation technique that trains models on historical data, tests on subsequent unseen data, then rolls the training window forward to repeat the process. This simulates real-world deployment better than single-period testing.

Why Do ML Models Fail in Live Trading?

Overfitting causes 70-80% of ML futures trading failures. Models learn historical noise patterns rather than genuine market structure, producing impressive backtests that collapse immediately in live trading. The problem intensifies with complex models and limited data—neural networks with thousands of parameters trained on two years of data almost guarantee overfitting.

Warning signs include backtest accuracy above 75-80%, perfect or near-perfect training results, and large performance gaps between training and validation sets. Real futures markets are noisy—legitimate prediction accuracy rarely exceeds 60-65% for directional forecasts. Higher accuracy suggests the model memorized specific historical sequences that won't repeat.

Data Leakage and Look-Ahead Bias

Look-ahead bias occurs when future information leaks into training data. A common error is calculating an indicator from a bar's close and then using it to trade at that same bar's open or at an intrabar price, before that close actually exists. The model learns to "predict" price movements using information that would not be available at decision time. This produces fantastic backtests and immediate live trading losses.

Preventing leakage requires careful timestamp handling: features must use only data available before the prediction point. For a 9:45 AM trade decision, use data through 9:44:59 AM at most. Validation and test data must be kept completely separate from training, and any preprocessing step (such as scaling or normalization) must be fit on the training data only, so that statistics from later periods cannot leak into the model.
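A sketch of both safeguards, assuming one-minute bars stamped with their open time (vendors differ, so check your feed's convention): only bars that are complete at decision time are used, and a scaler is fit on training data only and then reused unchanged on later data. Function names are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import mean, pstdev

BAR = timedelta(minutes=1)

def bars_known_at(bars, decision_time, bar_size=BAR):
    """Keep only bars that have fully closed by decision_time.

    Assumes each bar is stamped with its OPEN time: the 9:44 bar covers
    9:44:00-9:44:59 and becomes usable at 9:45:00.
    """
    return [b for b in bars if b["time"] + bar_size <= decision_time]

def fit_zscore(train_values):
    """Fit mean/std on TRAINING data only; reuse the returned function on later data."""
    mu, sd = mean(train_values), pstdev(train_values)

    def transform(x):
        return (x - mu) / sd if sd > 0 else 0.0

    return transform

open_times = [datetime(2024, 1, 2, 9, m) for m in (43, 44, 45)]
bars = [{"time": t, "close": 4750.0 + i} for i, t in enumerate(open_times)]
usable = bars_known_at(bars, datetime(2024, 1, 2, 9, 45))  # the 9:43 and 9:44 bars
```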

Market Regime Changes

A model trained during calm, low-volatility conditions can fail when volatility spikes; the unusually calm market of 2017, for example, was followed by sharp volatility spikes in February 2018 and March 2020. The learned patterns no longer apply. Successful ML systems include regime detection, identifying when market conditions differ from training data and reducing position sizes or pausing trading automatically.
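One simple form of regime detection compares current volatility with the volatility seen during training. This sketch uses a z-score with assumed thresholds (half size beyond 2 standard deviations, pause beyond 3); the thresholds and the volatility measure are choices to validate, not standard values.

```python
from statistics import mean, stdev

def regime_size_multiplier(train_vols, current_vol, z_reduce=2.0, z_pause=3.0):
    """Return a position-size multiplier based on how unusual current volatility is.

    train_vols: volatility readings from the training period (at least two values).
    """
    mu, sd = mean(train_vols), stdev(train_vols)
    z = abs(current_vol - mu) / sd if sd > 0 else 0.0
    if z >= z_pause:
        return 0.0  # far outside training conditions: pause trading
    if z >= z_reduce:
        return 0.5  # unusual conditions: trade half size
    return 1.0      # similar to training data: normal size

train_vols = [1.0, 1.2, 0.8, 1.1, 0.9]  # hypothetical daily volatility readings
```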

Regular retraining helps models adapt, but it introduces new risks, such as fitting to recent noise. Monthly retraining on expanding windows maintains relevance while preserving long-term patterns. Some traders use ensemble approaches, combining models trained on different periods to improve robustness across market conditions.

Execution Reality vs. Backtest Assumptions

Naive backtests assume perfect fills at signal prices. Live trading faces slippage, partial fills, and latency between signal generation and order execution. A model targeting 5-tick ES moves might backtest profitably but lose money once 1-2 ticks of slippage per trade shrink each win and enlarge each loss. The slippage impact grows with position size and market volatility.
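The arithmetic behind this is easy to check. The sketch below assumes a 58% win rate, symmetric 5-tick targets and stops, and slippage modeled as a fixed cost in ticks per round trip; one ES tick is 0.25 points, or $12.50 per contract.

```python
ES_TICK_VALUE = 12.50  # dollars per tick, one ES contract (0.25 point x $50 multiplier)

def expectancy_ticks(win_rate, win_ticks, loss_ticks, slippage_ticks=0.0):
    """Expected ticks per trade after a fixed slippage cost on every round trip."""
    gross = win_rate * win_ticks - (1 - win_rate) * loss_ticks
    return gross - slippage_ticks

for slip in (0, 1, 2):
    e = expectancy_ticks(0.58, 5, 5, slip)
    print(f"slippage {slip} tick(s): {e:.2f} ticks, {e * ES_TICK_VALUE:.2f} USD per trade")
```

Under these assumptions the gross edge is about 0.8 ticks ($10) per trade, so a single tick of slippage per round trip already turns it negative.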

Paper trading with realistic execution assumptions bridges this gap. Simulate 50-100ms latency, 1-tick slippage on market orders, and partial fills during fast markets. If the strategy survives these conditions for 2-3 months, live deployment risk decreases significantly.

What Do You Need to Implement ML Trading?

ML futures trading requires technical infrastructure beyond standard algorithmic systems. Python remains the dominant language, with libraries like pandas for data manipulation, scikit-learn for traditional ML, and TensorFlow or PyTorch for deep learning. Cloud computing via AWS, Google Cloud, or Azure provides computational power for training without purchasing expensive hardware.

Data Requirements and Costs

Quality historical data costs $50-$300 monthly depending on instruments and resolution. Tick data for ES, NQ, GC, and CL runs $100-$200 monthly from vendors like Kinetick or IQFeed. Storage requirements range from 50GB for several years of minute bars to 500GB+ for tick data across multiple instruments. Cloud storage costs $10-$30 monthly for typical setups.

Real-time futures data feeds add another $50-$100 monthly. The broker integration must support API access for automated order placement. Most futures brokers offer APIs, but testing connection stability and understanding rate limits prevents execution failures during live trading.

Computational Infrastructure

Training ML models requires more computing power than running them. A modern laptop handles model deployment, but training complex models benefits from cloud instances with multiple CPU cores or GPUs. Costs run $50-$200 monthly for periodic retraining, or $500+ for continuous learning systems that retrain daily.

Virtual private servers handle execution for traders wanting 24/7 automation without leaving home computers running. VPS costs range from $20-$100 monthly depending on specifications. The VPS setup guide covers latency requirements and configuration for futures automation.

Programming and Statistical Skills

ML trading demands intermediate Python skills—data manipulation, function writing, and debugging. Statistical knowledge helps evaluate model validity and avoid common pitfalls. Understanding concepts like cross-validation, bias-variance tradeoff, and regularization prevents the worst overfitting mistakes.

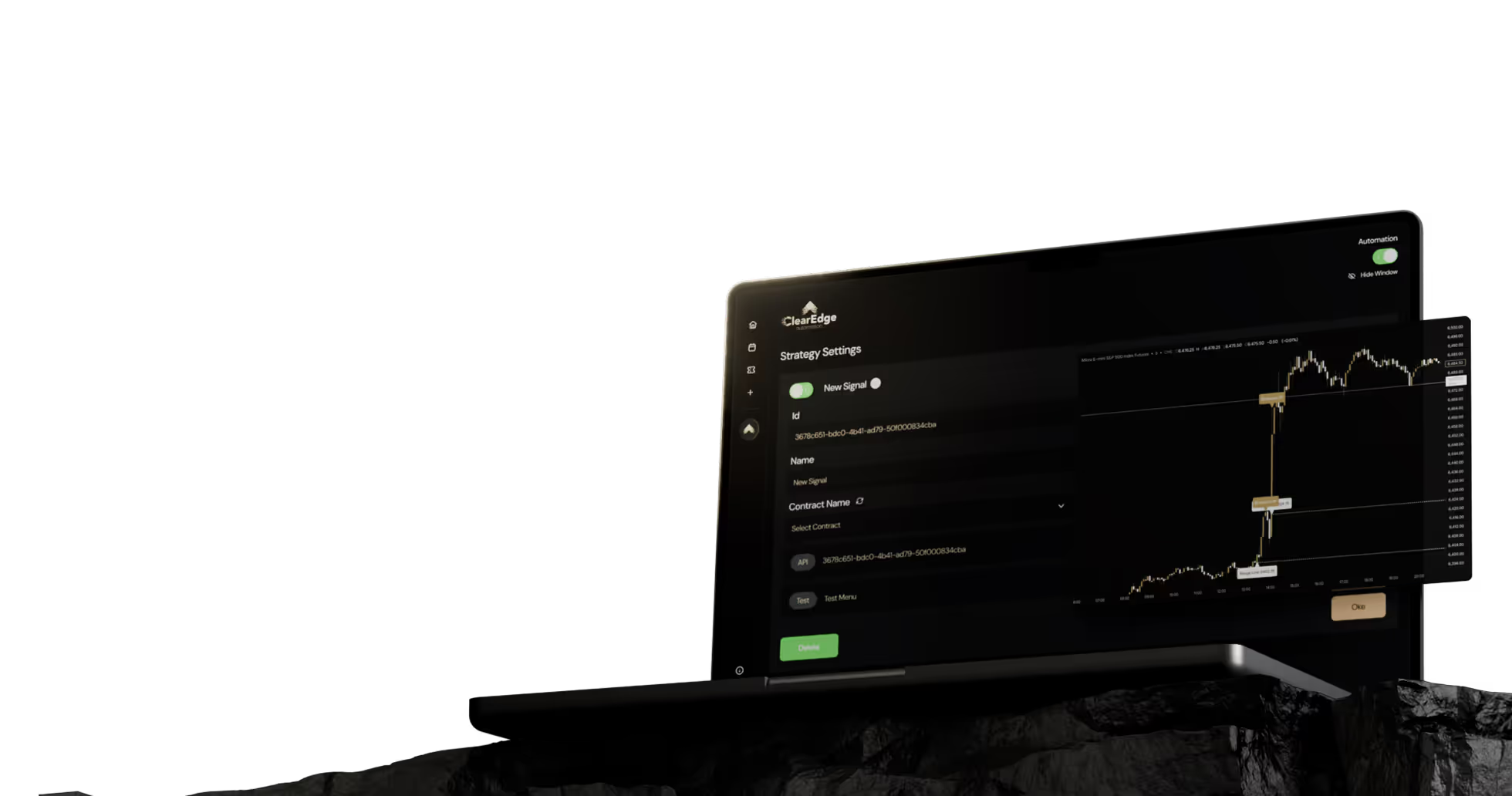

For traders without programming backgrounds, no-code platforms like ClearEdge Trading automate execution of manually developed strategies, though ML development itself requires coding. Some traders develop ML models in Python, export signals to TradingView, then automate execution through webhook integration. This separates model development from trade execution infrastructure.

Capital Requirements

Micro futures (MES, MNQ) allow ML strategy testing with $3,000-$5,000 accounts, though $10,000-$25,000 provides better drawdown cushion. Standard contracts (ES, NQ) require $25,000-$50,000 minimum for ML strategies with typical risk parameters. The capital planning guide details margin requirements and recommended account sizing.

Development costs add up—data subscriptions, cloud computing, and testing time before profitability. Budget $200-$500 monthly for infrastructure and 6-12 months for development and validation before expecting consistent returns.

How to Measure ML Strategy Performance

ML strategy evaluation goes beyond profit and loss. Sharpe ratio measures risk-adjusted returns: divide the average monthly excess return (return above the risk-free rate) by the standard deviation of monthly returns, then annualize by multiplying by √12. Annualized values above 1.5 indicate good performance; above 2.0 is excellent but rare in futures trading. Maximum drawdown shows the worst peak-to-trough decline, critical for assessing psychological sustainability and capital requirements.
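A minimal sketch of both metrics in standard-library Python. It follows the common convention of computing Sharpe on excess returns and annualizing monthly figures by √12; the zero default risk-free rate and the function names are assumptions.

```python
import math
from statistics import mean, stdev

def annualized_sharpe(monthly_returns, monthly_risk_free=0.0):
    """Mean monthly excess return / std of monthly excess returns, scaled by sqrt(12)."""
    excess = [r - monthly_risk_free for r in monthly_returns]
    sd = stdev(excess)
    return mean(excess) / sd * math.sqrt(12) if sd > 0 else float("nan")

def max_drawdown(equity_curve):
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak, worst = equity_curve[0], 0.0
    for value in equity_curve:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst

sharpe = annualized_sharpe([0.02, 0.01, 0.03, 0.0])
mdd = max_drawdown([100.0, 120.0, 90.0, 130.0, 117.0])  # 120 -> 90 is the worst drop
```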

Key Performance Metrics

Win rate alone misleads: a 40% win rate with a 3:1 reward-to-risk ratio outperforms a 65% win rate with a 1:1 ratio (about 0.6R versus 0.3R expected per trade, where R is the amount risked). Profit factor (gross profits divided by gross losses) should exceed 1.5 for robust strategies. Expectancy per trade, calculated as win rate times average win minus loss rate times average loss, shows the edge per trade before costs.
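These formulas are short enough to check directly. The sketch below expresses wins and losses in units of risk (R) for the win-rate comparison; function names are illustrative.

```python
def profit_factor(trade_pnls):
    """Gross profits divided by gross losses (infinite if there are no losses)."""
    gross_profit = sum(p for p in trade_pnls if p > 0)
    gross_loss = -sum(p for p in trade_pnls if p < 0)
    return gross_profit / gross_loss if gross_loss > 0 else float("inf")

def expectancy(win_rate, avg_win, avg_loss):
    """Expected P&L per trade before costs; avg_loss is given as a positive number."""
    return win_rate * avg_win - (1 - win_rate) * avg_loss

low_win_rate = expectancy(0.40, 3, 1)   # 40% winners at 3:1 reward-to-risk
high_win_rate = expectancy(0.65, 1, 1)  # 65% winners at 1:1 reward-to-risk
print(f"{low_win_rate:.2f}R vs {high_win_rate:.2f}R per trade")
```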

Track prediction accuracy separately from trading profits. A model with 58% directional accuracy might produce 62% winning trades due to better entries on high-confidence signals, or only 52% winners if execution and risk management are poor. This separation identifies whether problems stem from prediction or execution.

Out-of-Sample Testing Requirements

Never trust in-sample results. Models optimized on training data always look better than they'll perform live. Test on data the model has never seen—at minimum the most recent 15-20% of available history. Walk-forward testing with multiple out-of-sample periods provides more reliable performance estimates than single-period testing.

Performance should degrade slightly but remain profitable out-of-sample. If training-set Sharpe ratio is 2.5 and test-set drops to 0.8, the model overfitted. Acceptable degradation shows training Sharpe of 2.0 dropping to 1.6-1.8 out-of-sample—the model learned genuine patterns, not noise.

Forward Testing and Paper Trading

Paper trading with realistic execution assumptions is the final validation before risking capital. Run strategies for 2-3 months minimum, tracking actual fills you would have received. The forward testing framework should include worst-case scenarios like trading through FOMC announcements or NFP releases where slippage spikes.

Compare paper trading results to backtest expectations. Performance within 10-15% of backtested metrics suggests realistic assumptions. Larger gaps indicate execution problems, market impact, or changing conditions that require strategy adjustment before live deployment.

Sharpe Ratio: A measure of risk-adjusted returns calculated as average excess return (return above the risk-free rate) divided by the standard deviation of returns, usually annualized. Higher values indicate better return per unit of risk taken.

Frequently Asked Questions

1. Can machine learning predict futures prices accurately?

ML models achieve 55-65% directional accuracy in well-designed futures strategies, not the 80-90% often claimed in marketing materials. This modest edge produces profitability when combined with proper risk management, position sizing, and execution efficiency. Higher accuracy claims typically indicate overfitting or unrealistic backtesting assumptions.

2. Do I need to know Python to use ML trading strategies?

Developing ML trading systems requires intermediate Python programming and statistical knowledge. However, traders can use pre-built ML indicators on platforms like TradingView, then automate execution through services like ClearEdge Trading without coding the execution layer. Validating and customizing ML models still requires programming skills.

3. How much historical data do ML futures models need?

Most ML futures strategies require 2-5 years of historical data for reliable training, with more data needed for higher-frequency approaches. Tick data provides richer information than minute bars but requires more storage and processing power. Quality matters more than quantity—clean, validated data from reputable vendors prevents garbage-in-garbage-out problems.

4. What's the difference between ML trading and regular algorithmic trading?

Traditional algorithmic trading uses fixed rules like "buy when 20-period MA crosses above 50-period MA." ML trading uses statistical models that learn patterns from data and adapt predictions based on new information. ML systems handle complexity and non-linear relationships better but risk overfitting and require more validation to ensure robustness.

5. Can ML strategies work for prop firm challenges?

ML strategies can work for prop firm evaluations if they meet consistency requirements and avoid overfitting. The prop firm automation approach must include strict daily loss limits, position sizing rules, and trade pacing that satisfies minimum trading-day requirements. Models trained on limited data or optimized for maximum returns often violate drawdown rules during evaluation phases.

6. How often should ML futures models be retrained?

Most successful ML futures traders retrain models monthly or quarterly on expanding data windows. More frequent retraining risks chasing recent noise rather than learning robust patterns. Less frequent updates cause models to drift as market conditions change. Include regime detection that pauses trading when current conditions differ significantly from training data.

Conclusion

Machine learning algorithmic trading in futures markets offers sophisticated pattern recognition and prediction capabilities beyond traditional rule-based systems. Success requires rigorous validation through walk-forward testing, realistic execution assumptions, and robust risk controls that prevent overfitting disasters. Models achieving 55-65% directional accuracy combined with disciplined risk management produce consistent results across ES, NQ, GC, and CL contracts.

Start with simple supervised learning approaches on micro futures contracts to minimize risk during development. Paper trade for at least 2-3 months before live deployment, and maintain strict out-of-sample testing discipline throughout the development process. For traders without programming backgrounds, consider hybrid approaches that combine manual strategy development with automated execution platforms for consistent trade execution without emotional interference.

Want to explore more systematic trading approaches? Read our complete algorithmic trading guide for detailed frameworks on strategy development, backtesting, and automation implementation across futures markets.

References

- CME Group. "Machine Learning in Futures Markets: Market Structure Analysis." https://www.cmegroup.com

- Journal of Trading. "Predictive Accuracy of Machine Learning Models in Futures Trading." Vol. 15, 2024.

- Lopez de Prado, M. "Advances in Financial Machine Learning." Wiley, 2018.

- Algorithmic Trading Review. "Retail Adoption of ML Trading Systems." Industry Survey, 2025.

- Futures Industry Association. "Technology in Futures Markets Report." 2025.

Disclaimer: This article is for educational and informational purposes only. It does not constitute trading advice, investment advice, or any recommendation to buy or sell futures contracts. ClearEdge Trading is a software platform that executes trades based on your predefined rules, it does not provide trading signals, strategies, or personalized recommendations.

Risk Warning: Futures trading involves substantial risk of loss and is not suitable for all investors. You could lose more than your initial investment. Past performance of any trading system, methodology, or strategy is not indicative of future results. Machine learning models require extensive validation and may fail in live market conditions. Before trading futures, you should carefully consider your financial situation and risk tolerance. Only trade with capital you can afford to lose.

CFTC RULE 4.41: HYPOTHETICAL OR SIMULATED PERFORMANCE RESULTS HAVE CERTAIN LIMITATIONS. UNLIKE AN ACTUAL PERFORMANCE RECORD, SIMULATED RESULTS DO NOT REPRESENT ACTUAL TRADING. ALSO, SINCE THE TRADES HAVE NOT BEEN EXECUTED, THE RESULTS MAY HAVE UNDER-OR-OVER COMPENSATED FOR THE IMPACT, IF ANY, OF CERTAIN MARKET FACTORS, SUCH AS LACK OF LIQUIDITY. SIMULATED TRADING PROGRAMS IN GENERAL ARE ALSO SUBJECT TO THE FACT THAT THEY ARE DESIGNED WITH THE BENEFIT OF HINDSIGHT. NO REPRESENTATION IS BEING MADE THAT ANY ACCOUNT WILL OR IS LIKELY TO ACHIEVE PROFITS OR LOSSES SIMILAR TO THOSE SHOWN.

By: ClearEdge Trading Team | 29+ Years CME Floor Trading Experience
